Posted on Jul 28, 2023 in Featured

The Editorial Board at the Journal of Mobile Technology in Medicine is proud to present Volume 9, Issue 1. Mobile technology in medicine is a rapidly developing area, and we hope to continue accelerating research in the field. We look forward to your submissions for Issue 2.

Original Articles

001 “Hell, Yeah!” A Qualitative Study of Inpatient Attitudes towards Healthcare Professionals’ Use of Mobile Devices

Lori Giles-Smith BA (Hons), Andrea Spencer RN

011 Mind the gap – a study on mHealth based treatment process optimization in addiction medicine

Ulf Gerhardt, Thomas Gerlitzki, Ruediger Breitschwerdt, Oliver Thomas

029 A Pilot Study of Using a Personalised Video Message Delivered by Text Message to Increase Maternal Influenza Vaccine Uptake

Khai Lin Kong, Sushena Krishnaswamy, Ryan Begley, Paul Paddle, Michelle L. Giles

035 Comparison of Mid-Sternum and Center of Mass Accelerometry to Force Plate Measures for the Assessment of Standing Balance

Ryan Z. Amick, Nils A. Hakansson, David M. Jorgensen, Jeremy A. Patterson, Michael J. Jorgensen

043 Teleultrasound in Remote and Austere Environments

Reuben J. Chen

048 Pilot Study: Real-Time Monitoring and Medication Reminders in Glaucoma Patients

Alice H. Li, Yang Shou, Zhongqiu Li, Ann C. Fisher, Jeffrey L. Goldberg, Yang Sun, Wen-Shin Lee, Robert T. Chang

In keeping with our open-access principles, all articles are published both as full text and as PDF files for download. For your convenience, attached to this post is a PDF file containing the complete Volume 9, Issue 1, which can be easily downloaded and saved for viewing offline.

We look forward to hearing from readers in the comments section, and encourage authors to submit research to be considered for publication in this peer-reviewed medical journal.

Yours Sincerely,

Editorial Board

Journal of Mobile Technology in Medicine

Posted on Dec 4, 2018 in Original Article

Designing a WIC App to Improve Health Behaviors: A Latent Class Analysis

Sylvia H. Crixell PhD, RD1, Brittany Reese Markides MS, RD2, Lesli Biediger-Friedman PhD, MPH, RD3, Amanda Reat MS, RD2, Nicholas Bishop PhD4

1Nutrition and Foods Professor, School of Family and Consumer Sciences, Texas State University, San Marcos, Texas; 2Nutrition and Foods Lecturer, School of Family and Consumer Sciences, Texas State University, San Marcos, Texas; 3Nutrition and Foods Assistant Professor, School of Family and Consumer Sciences, Texas State University, San Marcos, Texas; 4Family and Child Development Assistant Professor, School of Family and Consumer Sciences, Texas State University, San Marcos, Texas

Corresponding Author: scrixell@txstate.edu

Journal MTM 7:2:7–16, 2018

doi:10.7309/jmtm.7.2.2

Background: Smartphone apps have potential to effectively deliver health education and improve health behaviors among at-risk populations. To be successful, apps should include user input during stages of development. Previously, a prototype app designed for participants in the Texas Special Supplemental Nutrition Program for Women, Infants, and Children (WIC) was developed based on input from focus groups.

Aims: This research aimed to continue app design by soliciting user input via a survey from a state-wide sample.

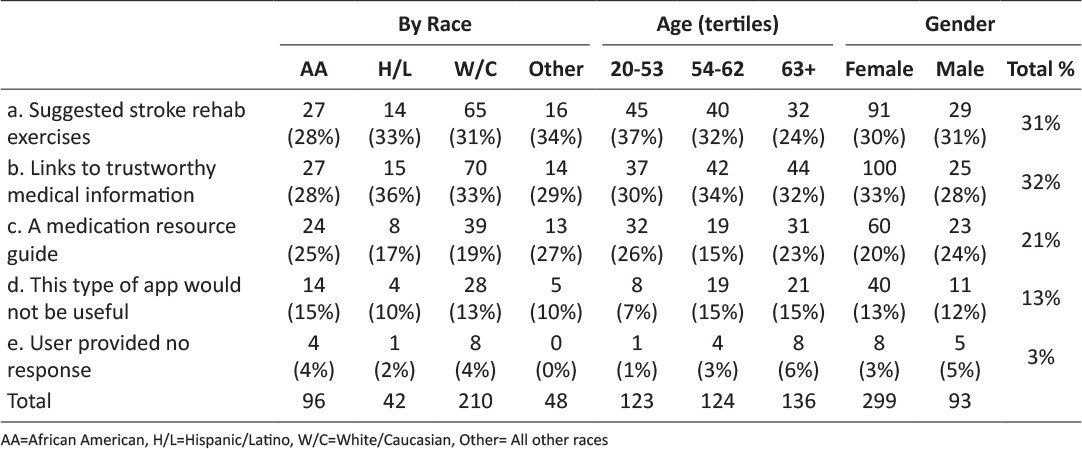

Methods: Texas WIC clients were asked about physical activity, healthy eating, and breastfeeding behaviors, stage of change regarding health behaviors, current use of health-related apps, and perceptions of app prototype features. Latent class analysis (n=942) was used to identify mutually exclusive groups based on the strength of participants’ agreement that prototype features would help them exercise more or consume more fruits and vegetables. Logistic regression examined health-related characteristics and sociodemographic differences between classes.

Results: Response to app prototype features was positive. A 2-class model best described latent classes. Class members who strongly agreed that prototype features would help them improve health behaviors were younger (< 35 years), not pregnant, already using health-related apps, and in the contemplation, preparation, or action stages of change regarding physical activity.

Conclusion: Refinement of the Texas WIC app should incorporate input from individuals who are pregnant, older than 35 years, or in pre-contemplation regarding physical activity. The iterative process of user-centered design applied in this research may serve as a useful framework for development of other public health apps.

Keywords: health promotion, technology, vegetables, smartphone, exercise

Introduction

In the United States, poverty affects women and children disproportionately, as they make up approximately 70% of the low-income population.1 Poverty is associated with deleterious health behaviors, such as consuming a low-quality diet and being physically inactive, particularly among vulnerable populations such as women and children.2 These behaviors contribute to serious health concerns, including poor birth outcomes, obesity, heart disease, type 2 diabetes, and certain cancers.2,3 Limited access to evidence-based information related to health, physical activity, nutrition, and infant care is a likely contributor to poor health behaviors and outcomes among low-income individuals and may be an important barrier that contributes to ongoing health disparities.2

Launched in 1972, the Special Supplemental Nutrition Program for Women, Infants, and Children (WIC) is a federal grant program that serves low-income pregnant, postpartum, and breastfeeding women, infants, and children up to age 5 who are at nutritional risk, with the goal of improving health behaviors and outcomes during critical periods of development.4 Annually, 8 million women, infants, and children are enrolled in WIC, with approximately 886 thousand participating in the state of Texas.5 WIC provides a number of resources, including vouchers for healthful foods to support pregnancy, lactation, and growth, and referrals to health care services.6 While they are enrolled in the program, WIC clients are expected to regularly participate in education that focuses on promoting healthy behaviors such as breastfeeding, exercise, and healthy eating (e.g., eating fruits and vegetables, cooking meals at home, eating meals as a family).6 Historically, WIC clinics have worked to impart evidence-based information related to health behaviors through face-to-face education offered at clinics. This education modality presents barriers to an already taxed population, which may lack reliable transportation to clinics and childcare during education sessions.7 In an attempt to mitigate these barriers, many WIC state agencies now offer online client education.8 However, reliable access to a computer with internet connectivity is not ubiquitous among Americans, and low-income smartphone owners are more likely to rely on their smartphones as a primary way of connecting to the internet.9 Indeed, Texas WIC clients have expressed a desire to receive education and services delivered via phone.7,10 Thus, smartphone apps may offer a viable alternative interface for providing innovative, accessible, and customizable health education to the WIC population.
Research has supported the use of smartphones as a behavioral modification tool, with a number of applications developed to improve diet and physical activity.11

Smartphone apps have unique characteristics that may make them particularly well suited to delivering health education and supporting health behavior change. For example, smartphone apps can be used to reach clients in real time, offer continual assessment of identified treatment goals, and deliver meaningful information and support to reinforce behavior change.11 Despite the promise of smartphone apps, individuals tend to stop using an app about three months after downloading it.12 Therefore, app developers should consider, a priori, the expressed needs of intended users. Indeed, all approaches to developing technological tools to improve health outcomes should engage people first.13 One approach to developing apps that prioritizes individuals is user-centered design (UCD), an evidence-based, iterative process prioritizing user input and engagement in designing products and services.14 Because UCD has been previously used to develop appealing smartphone apps that target health behaviors, such as physical activity,15 this process shows promise for developing an effective app for WIC clients. In 2014, based on strong interest among Texas WIC clients for delivery of nutrition education and services via their smartphones,16 the Texas Department of State Health Services WIC program commissioned us to develop a smartphone app prototype.
To do this, we began the UCD process by conducting focus groups with a diverse sample of female WIC participants in south central Texas to explore current smartphone app use and preferences.17 Based on this initial user-input and tenets of the Social Cognitive Theory,18 we developed an app prototype, designed to provide customizable, interactive, and user-centered health education to the Texas WIC population.17 The prototype included features to support physical activity (i.e., activity calendar, activity tracker, exercise videos, resource library), healthy eating (i.e., meal calendar, healthy eating tracker, cooking videos, resource library, shopping list, fruit and vegetable game, farmer’s market locator), and breastfeeding (i.e., breastfeeding timer, growth chart, live assistant, resource library).17

The aim of this research was to continue the UCD process of developing an app for Texas WIC clients by disseminating a statewide survey seeking input regarding the app prototype features. Analysis of clients’ perceptions of prototype features designed to support physical activity and healthy eating is included in this report. Our approach was to use latent class analysis to identify subgroups of respondents based on the extent to which they agreed that the features would help them increase physical activity and intake of fruits and vegetables, and logistic regression to examine how membership in the latent classes was associated with sociodemographic and health-related characteristics. Recommendations for continued user-centered design of the WIC app were informed by characteristics of respondents in latent classes.

Methods

Sample

The survey was posted on the Texas WIC website from September 9, 2014 through November 6, 2014. Clients visiting the website were greeted with a pop-up window presenting an offer to take the survey in English or Spanish. Additionally, clinics in central Texas that had access to client email addresses sent invitations to clients to take the survey. Of the 606 emails sent, 88 addresses were invalid, 102 recipients began taking the survey, and 63 finished. Overall, 1,019 WIC clients completed the survey. Participants who were younger than 18 (n=50), were male (n=14), or had an implausible reported height (shorter than 4 feet or taller than 7 feet, n=27)19 were removed from the analytic sample, leaving a total sample of 942 respondents. The Institutional Review Boards of Texas State University and the Texas Department of State Health Services approved this study.
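The exclusion rules above can be sketched as a simple filter. This is only an illustration under stated assumptions: the `Respondent` fields, the use of inches for height, and the five example records are hypothetical and are not taken from the survey data.

```python
from dataclasses import dataclass


@dataclass
class Respondent:
    # Hypothetical field names; the survey's actual variable names are not given.
    age_years: int
    sex: str              # "female" or "male"
    height_inches: float  # reported height, assumed here to be in inches


def is_eligible(r: Respondent) -> bool:
    """Return True if the respondent passes the three exclusion rules in the text."""
    if r.age_years < 18:
        return False
    if r.sex == "male":
        return False
    # Implausible height: shorter than 4 feet (48 in) or taller than 7 feet (84 in).
    if r.height_inches < 48 or r.height_inches > 84:
        return False
    return True


# Made-up records, for illustration only.
respondents = [
    Respondent(25, "female", 64.0),
    Respondent(17, "female", 62.0),   # excluded: younger than 18
    Respondent(30, "male", 70.0),     # excluded: male
    Respondent(28, "female", 40.0),   # excluded: implausible height
    Respondent(41, "female", 66.5),
]
analytic_sample = [r for r in respondents if is_eligible(r)]
print(len(analytic_sample))
```

A respondent who meets more than one exclusion rule is still removed only once, which is why per-rule counts need not sum to the total number removed.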

Survey

The survey, developed in English in collaboration with Texas WIC staff, included approximately 130 questions, depending on responses to logic-driven branches. To develop a version of the client survey in Spanish, the English survey was translated to Spanish, back-translated, and discrepancies were reconciled. The survey was implemented using Qualtrics software (2014, Provo, UT). The welcome page briefly described the survey, provided assurances of privacy, and described incentives for survey completion, which included credit for taking a WIC nutrition class and receiving a t-shirt. After giving informed consent, participants were asked if they owned a smartphone. Those who responded with ‘no’ were routed to a thank you page and the survey was discontinued.

The survey was divided into 3 major sections corresponding to health behaviors addressed by the WIC app prototype, including physical activity, healthy eating, and breastfeeding, followed by a set of demographics questions. Each health behavior section asked about current health practices, stage of change, facilitators and barriers to performing the health behavior, belief that app features would help to improve health behaviors, and current use of apps regarding that health behavior. Facilitators and barriers to health behaviors were drawn from focus groups held during the initial phase of the UCD of this prototype app.17 The current study is an analysis of participant response to the physical activity and healthy eating features and does not include breastfeeding.

Current practices regarding physical activity were measured using the Godin leisure-time exercise questionnaire, which creates a physical activity score based on questions about intensity and duration of exercise.20 This score classifies participant activity as insufficiently active, moderately active, or active. For analysis, categories were collapsed into a binary variable (0 = insufficient activity, 1 = moderately active or active). Stage of change for physical activity behaviors was assessed using a 4-question system adapted from Wolf et al. (1 = pre-contemplation, 2 = contemplation, 3 = preparation, 4 = action).21 Survey respondents were asked to indicate on a 5-point Likert scale to what extent they agreed that specific barriers and facilitators to physical activity applied to them personally (1 = strongly disagree, 2 = disagree, 3 = neither agree nor disagree, 4 = agree, 5 = strongly agree); these data were not included in this analysis. Finally, after each of the four app prototype features addressing exercise was displayed (an activity calendar, activity tracker, exercise videos, and resource library), clients were asked whether they agreed that the feature would help them exercise more often (1 = strongly disagree, 2 = disagree, 3 = neither agree nor disagree, 4 = agree, 5 = strongly agree).
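The scoring and collapsing step might look like the following minimal sketch. The weights (9, 5, 3) and the cut points (24 and 14) are the commonly published Godin scoring conventions and are an assumption here, since the article does not state them; the function names are hypothetical.

```python
def godin_score(strenuous: int, moderate: int, light: int) -> int:
    """Weekly leisure score from counts of exercise bouts at each intensity.

    Weights of 9, 5, and 3 are the commonly published Godin weights
    (an assumption; the article does not list them).
    """
    return 9 * strenuous + 5 * moderate + 3 * light


def godin_category(score: int) -> str:
    """Three-level classification using commonly published cut points (assumed)."""
    if score >= 24:
        return "active"
    if score >= 14:
        return "moderately active"
    return "insufficiently active"


def activity_binary(score: int) -> int:
    """Collapse to the binary coding described in the text:
    0 = insufficient activity, 1 = moderately active or active."""
    return 0 if godin_category(score) == "insufficiently active" else 1


# Example: one strenuous, two moderate, and three light bouts per week.
score = godin_score(strenuous=1, moderate=2, light=3)
print(score, godin_category(score), activity_binary(score))
```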

Intake of fruits and vegetables was used as an indicator of healthy eating practices and was measured using a brief screener employed by Wolf et al. (fruit and vegetable servings consumed on the previous day, excluding servings of white potatoes, were summed for the final count and included in analysis as a continuous variable).21 Participants were also asked how many family meals they had each week, which was included as a continuous variable. Assessments of stage of change and whether the seven prototype features (a meal calendar, healthy eating tracker, cooking videos, a resource library, shopping list, fruit and vegetable game, and a farmer’s market locator) that addressed healthy eating would help them eat more fruits and vegetables were conducted in the same manner as described for physical activity.

The survey included questions about demographic characteristics. Household size was measured as a continuous variable. Age of participants (0 = 35 years or older, 1 = younger than 35 years), education (0 = high school or less, 1 = post-secondary education), race/ethnicity (White = reference, Black, Hispanic, Other), language used to complete the survey (0 = English, 1 = Spanish), employment status (0 = unemployed, 1 = employed), location of residence (0 = urban, 1 = rural), and body mass index (BMI; < 18.5 = underweight, 18.5 – 24.9 = normal weight, 25 – 29.9 = overweight, ≥ 30 = obese) were coded as categorical variables. Because few participants had a BMI identifying them as underweight, participants identified as underweight and normal weight were combined and used as the BMI reference group. BMI was not calculated for women who were pregnant (1 = pregnant, 0 = not pregnant). Food security was assessed with the U.S. Household Food Security Survey Module: Six-Item Short Form.22 Food security status was coded as a dichotomous variable (0 = very low or low food security, 1 = marginal or high food security). Current use of physical activity or healthy eating apps was coded as a dichotomous variable for each app type (0 = never or almost never use, 1 = sometimes or daily use).
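As an illustration of the BMI coding, the sketch below computes BMI and assigns the regression group, merging underweight and normal weight into the reference group and skipping pregnant respondents. The imperial-unit inputs, the function names, and the group labels are assumptions for the example; the article does not describe how height and weight were recorded beyond feet for height.

```python
from typing import Optional


def bmi_from_imperial(weight_lb: float, height_in: float) -> float:
    """BMI in kg/m^2 from pounds and inches (standard conversion factor 703)."""
    return 703.0 * weight_lb / (height_in ** 2)


def bmi_category(bmi: float) -> str:
    """Standard adult BMI categories, matching the cut points in the text."""
    if bmi < 18.5:
        return "underweight"
    if bmi < 25.0:
        return "normal weight"
    if bmi < 30.0:
        return "overweight"
    return "obese"


def bmi_group(bmi: float, pregnant: bool) -> Optional[str]:
    """Group used for analysis: underweight and normal weight are merged into
    the reference group; BMI is not assigned for pregnant respondents."""
    if pregnant:
        return None
    category = bmi_category(bmi)
    if category in ("underweight", "normal weight"):
        return "underweight/normal (reference)"
    return category


bmi = bmi_from_imperial(weight_lb=170, height_in=65)
print(round(bmi, 1), bmi_group(bmi, pregnant=False))
```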

Statistical analyses

Latent class analysis, a form of mixture modeling allowing for the classification of unobserved heterogeneity in responses to multiple variables, was used to identify homogeneous, mutually exclusive groups of WIC clients based on the extent to which they agreed that prototype features would help them exercise more often or eat more fruits and vegetables.23 To identify the number of classes that best represented the underlying response groups, a series of model fit tests was conducted starting with a single-class model. Model fit indices, including Akaike Information Criterion (AIC), Bayesian Information Criterion (BIC), and sample-size adjusted BIC (SSA-BIC), were used to determine whether the inclusion of each additional class provided improved model fit. Model entropy, representing the accuracy of assigning individuals to classes, was also considered. Finally, the Vuong-Lo-Mendell-Rubin (VLMR) likelihood ratio test provided a statistical test of whether the estimated model significantly improved model fit compared to a model with one less class. Once the optimal number of latent classes was determined, logistic regression was used to examine differences between classes based on sociodemographic and health-related characteristics. The latent class analysis and logistic regression were conducted with Mplus 7.324 using maximum likelihood estimation with robust standard errors, providing treatment of missing data with maximum likelihood and estimation of standard errors robust to non-normality. All other analyses were conducted using IBM SPSS Statistics for Windows, version 24 (IBM Corp., Armonk, N.Y., USA).
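For readers unfamiliar with these indices, the following sketch shows how they are conventionally computed from a fitted model's log-likelihood, number of free parameters, and posterior class probabilities. It uses the standard textbook formulas rather than Mplus itself, and the log-likelihoods and parameter counts in the example are hypothetical values, not the entries of Table 1.

```python
import math
from typing import List


def aic(loglik: float, n_params: int) -> float:
    return -2.0 * loglik + 2.0 * n_params


def bic(loglik: float, n_params: int, n: int) -> float:
    return -2.0 * loglik + n_params * math.log(n)


def ssa_bic(loglik: float, n_params: int, n: int) -> float:
    """Sample-size adjusted BIC: replaces n with (n + 2) / 24 in the penalty."""
    return -2.0 * loglik + n_params * math.log((n + 2) / 24.0)


def relative_entropy(posteriors: List[List[float]]) -> float:
    """Relative entropy in [0, 1]; values near 1 indicate clean class assignment.

    `posteriors` holds one row per respondent with one probability per class.
    """
    n = len(posteriors)
    k = len(posteriors[0])
    if k < 2:
        raise ValueError("entropy is undefined for a single-class model")
    total = 0.0
    for row in posteriors:
        for p in row:
            if p > 0.0:
                total += -p * math.log(p)
    return 1.0 - total / (n * math.log(k))


# Hypothetical fits (NOT the values in Table 1): log-likelihood and parameter count.
n = 942
fits = {1: (-14000.0, 44), 2: (-12500.0, 89), 3: (-12350.0, 134)}
for classes, (ll, k) in fits.items():
    print(classes, round(aic(ll, k), 1), round(bic(ll, k, n), 1), round(ssa_bic(ll, k, n), 1))
```

Lower values of AIC, BIC, and SSA-BIC indicate better fit after penalizing model complexity, so a shrinking improvement from one model to the next is one signal that an additional class adds little.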

Results

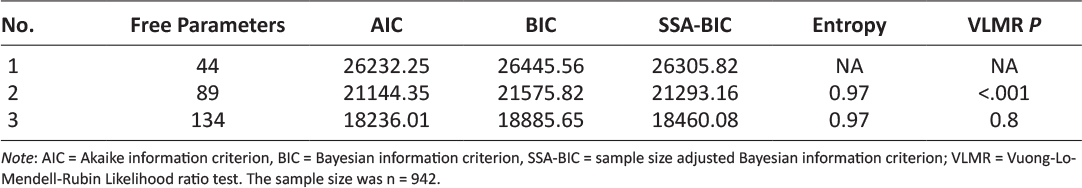

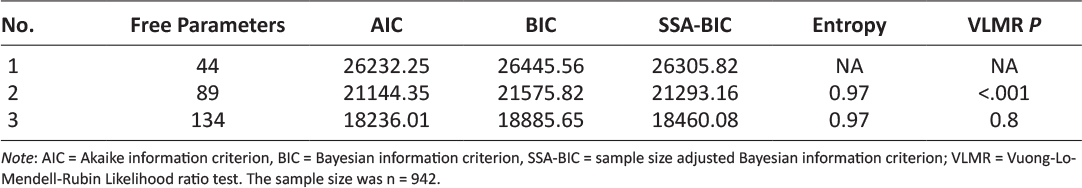

Table 1 presents model fit indices used to identify the optimal number of latent classes based on WIC participants’ responses to the survey questions “this app feature would help me exercise more/eat more fruits and vegetables.” Compared to the 1-class model, the 2-class model had improved AIC, BIC, and SSA-BIC model fit indices; the rate of model fit improvement decreased with the 3-class model. The VLMR test indicated that the 2-class model was a significant improvement on the 1-class model (p < .001), but the 3-class model was not a significantly better fit than the 2-class model (p = 0.8). Thus, based on model fit tests and the necessity of parsimony, the 2-class model was identified as the best description of latent classes.

Table 1: Goodness of fit indices for determining number of latent classes among Texas WIC survey respondents.

Class 1 (strongly agree; 32.9% of the sample) was identified as the group that strongly agreed that app features would help them improve targeted health behaviors; those in class 2 (neutral, agree; 64.8% of the sample) agreed or were neutral regarding whether the app features would help improve health behaviors. Figure 1 shows the distribution of responses to questions asking if using the app features would help respondents improve targeted health behaviors.

Figure 1: WIC clients’ agreement that app features would help improve targeted health behaviors. a) Depicts Class 1 (strongly agree) and Class 2 (neutral, agree) feedback regarding physical activity features. b) Depicts Class 1 (strongly agree) and Class 2 (neutral, agree) feedback regarding healthy eating features.

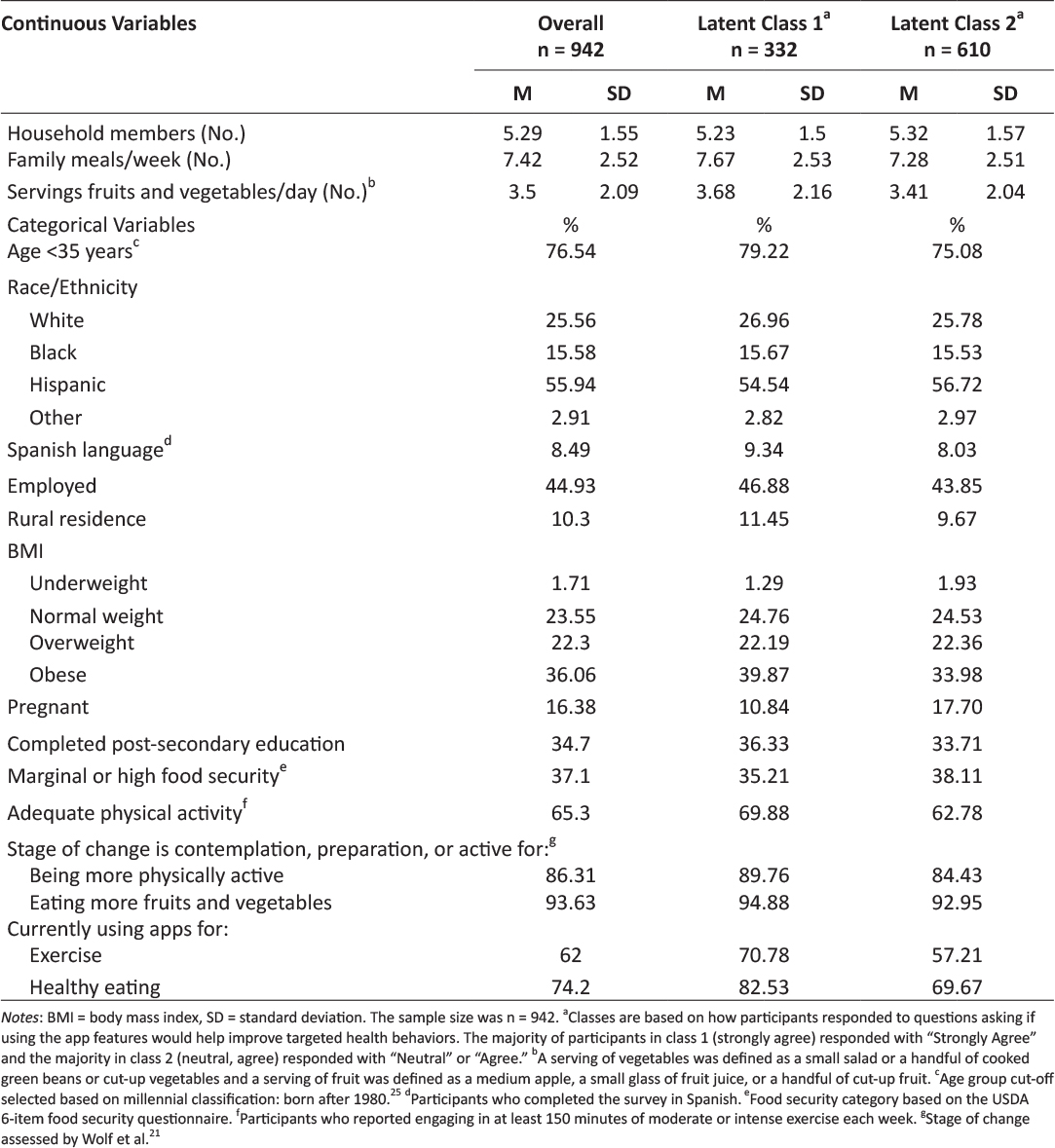

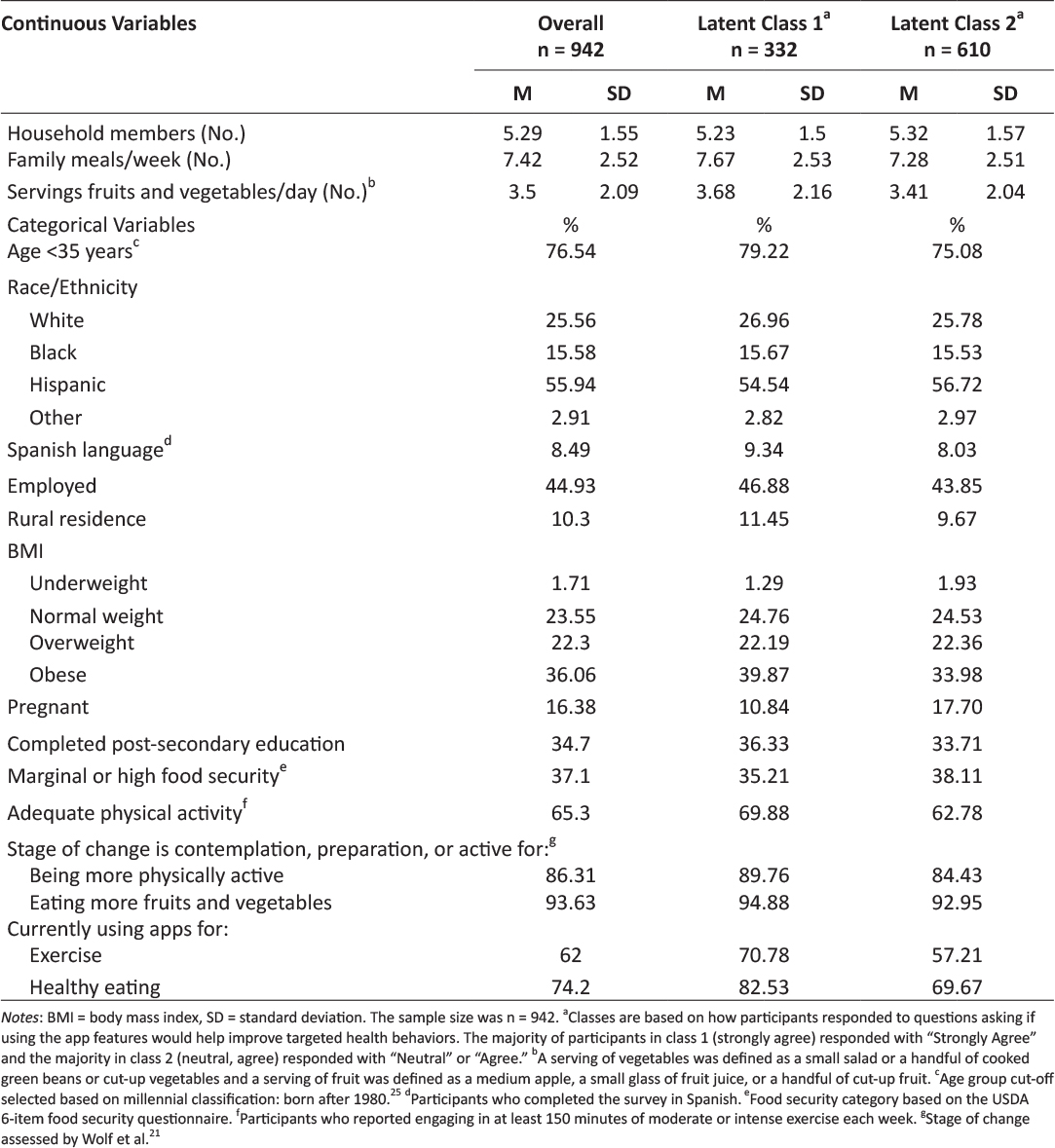

Table 2 includes descriptive statistics for the complete analytic sample as well as by latent class. On average, respondents in the complete sample had approximately 5 household members and consumed 7.4 family meals per week, including 3.5 servings of fruits and vegetables per day. Approximately three out of four respondents were younger than 35 years of age at the time of the survey, which categorizes them as millennials.25 Slightly more than half of participants were Hispanic and the vast majority took the survey in English. Approximately 45% of respondents were employed and the majority were urban-dwellers. Fifty-eight percent were overweight or obese. Sixteen percent of the sample was pregnant. Approximately a third of the sample had completed post-secondary education and a third had marginal or high food security. Two-thirds engaged in at least 150 minutes of moderate or intense physical activity each week. Almost two-thirds of participants used apps for exercise and three-quarters used healthy eating apps. The vast majority recognized the benefits of being physically active and eating fruits and vegetables. Likewise, the majority were in the contemplation, preparation, or action stages of change regarding being physically active and eating fruits and vegetables.

Table 2: Description of the overall sample of Texas WIC clients and the 2 latent classes.

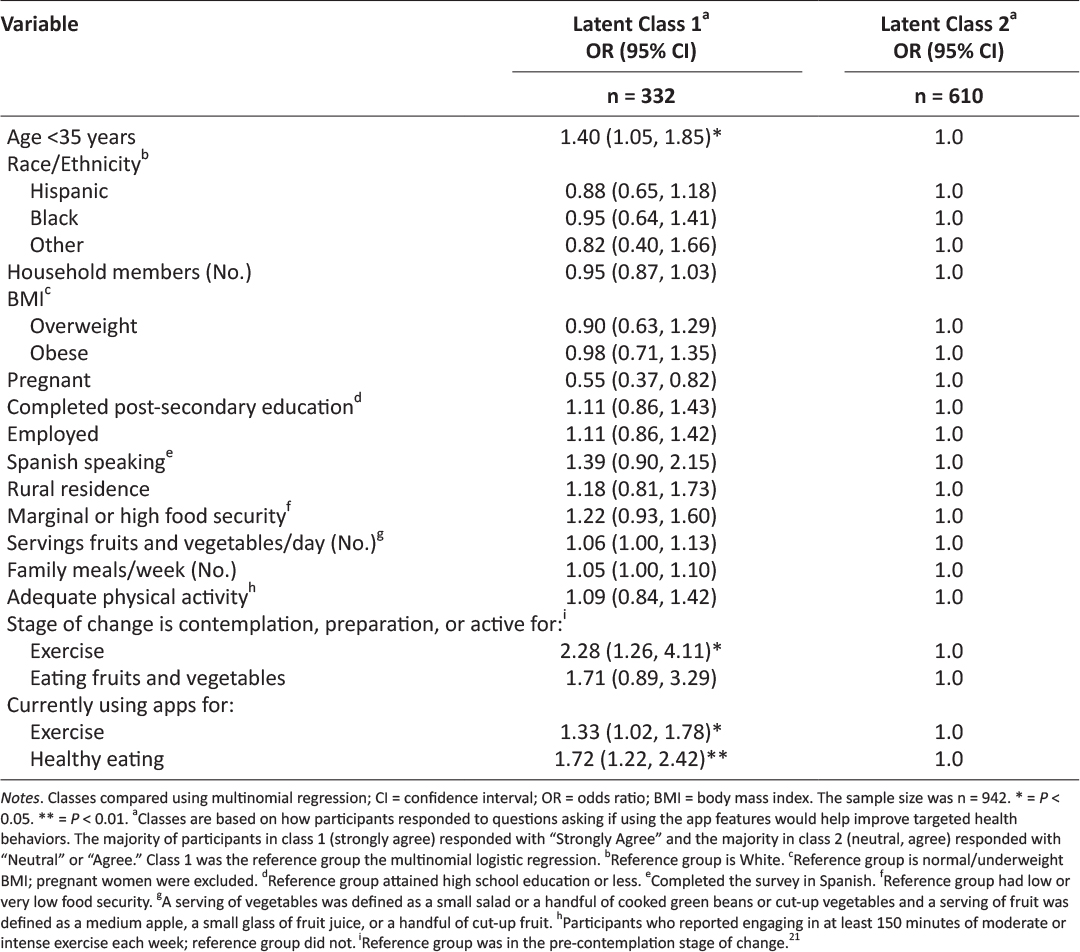

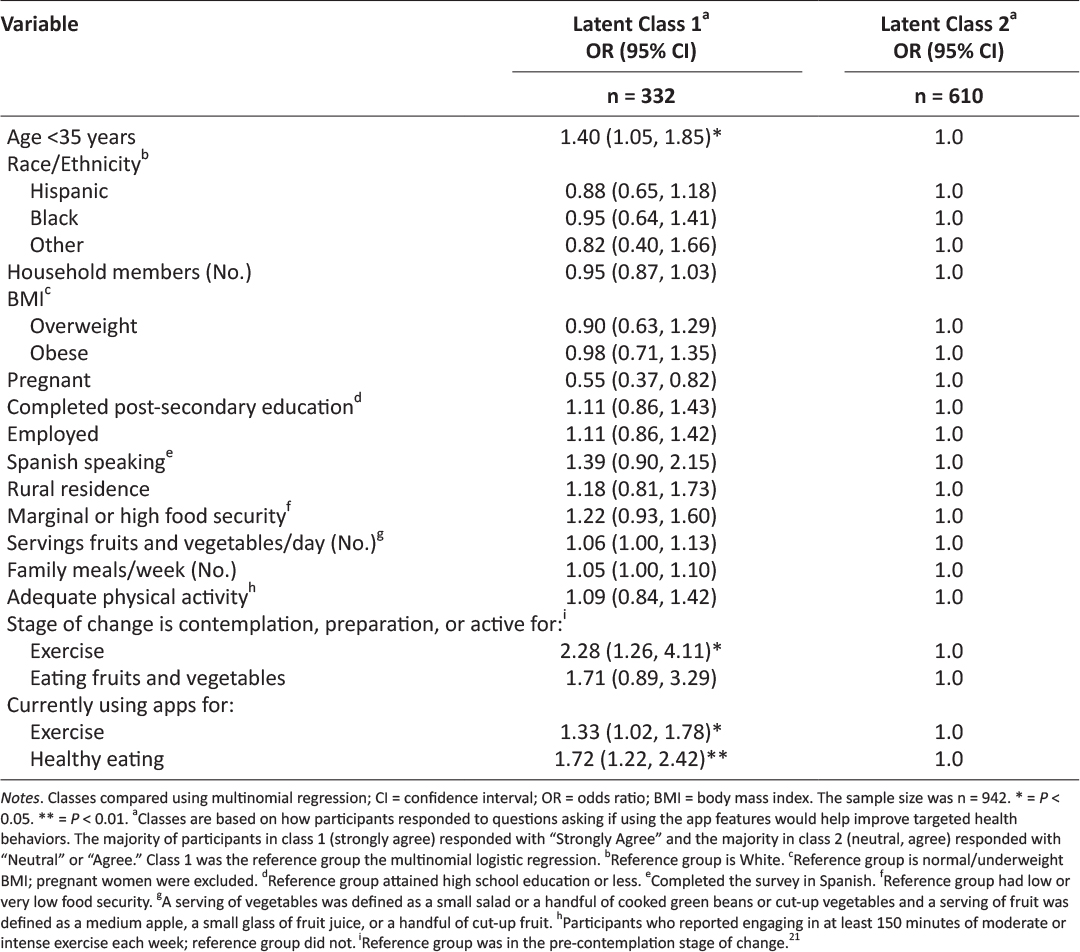

The results of the multinomial logistic regression are shown in Table 3; class 2 (neutral, agree) was used as the reference group. Age, pregnancy, current app use, and stage of change regarding exercise were significant predictors of class membership. Specifically, respondents were more likely to be in class 1 (strongly agree) if they were younger than 35 years old (OR = 1.40; CI = 1.05, 1.86), were in the contemplation, preparation, or action stages of change regarding exercise (OR = 2.28; CI = 1.26, 4.11), or were currently using apps for exercise (OR = 1.33; CI = 1.02, 1.78) or healthy eating (OR = 1.72; CI = 1.22, 2.42). Respondents were significantly less likely to be in class 1 (strongly agree) if they were pregnant (OR = 0.55; CI = 0.37, 0.82).
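To show how an odds ratio and its interval relate to the underlying logistic regression coefficient, the sketch below converts in both directions. It assumes a 95% Wald-type interval (the text reports "CI" without stating the level or method), and it back-calculates an approximate coefficient and standard error from the reported pregnancy result purely for illustration.

```python
import math

Z_95 = 1.959963984540054  # standard normal quantile for a two-sided 95% interval


def odds_ratio_with_ci(coef: float, se: float, z: float = Z_95):
    """Exponentiate a logit coefficient and its Wald interval bounds."""
    return math.exp(coef), math.exp(coef - z * se), math.exp(coef + z * se)


def coef_and_se_from_ci(odds_ratio: float, lower: float, upper: float, z: float = Z_95):
    """Approximate the coefficient and standard error implied by a reported
    odds ratio and interval, assuming a symmetric interval on the log scale."""
    coef = math.log(odds_ratio)
    se = (math.log(upper) - math.log(lower)) / (2.0 * z)
    return coef, se


# Reported pregnancy effect: OR = 0.55; CI = 0.37, 0.82 (assumed to be a 95% interval).
coef, se = coef_and_se_from_ci(0.55, 0.37, 0.82)
or_, lo, hi = odds_ratio_with_ci(coef, se)
print(round(coef, 3), round(se, 3), round(or_, 2), round(lo, 2), round(hi, 2))
```

An odds ratio below 1 with an interval that excludes 1, as in the pregnancy result, corresponds to a negative coefficient that differs significantly from zero at the assumed level.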

Table 3: Predictors of Texas WIC clients’ perceptions of WIC app prototype features.

Discussion

This paper describes an intermediate stage of UCD of an app designed for Texas WIC participants. By investigating variation in responses to the app prototype features, our aim was to identify characteristics of survey respondents associated with the strength of their agreement that the physical activity and healthy eating features would help them improve targeted health behaviors. Importantly, survey respondents’ reactions to the app prototype features were positive. Participants who strongly agreed that the app features would help support behavior changes were more likely to be younger than 35 years of age, in the contemplation, preparation, or action stages of change for the targeted health behaviors, and currently using apps to foster these health behaviors.

The influence of age on class membership is not unexpected. Indeed, millennials, or those born after 1980, are the age group most likely to be continually engaged with smartphones26 and use them for a variety of activities such as searching for jobs and accessing health information.27 One potential avenue for increasing the acceptability of this app among older WIC participants would be to link to or otherwise leverage platforms that have cross-generational appeal.28 In the context of the transtheoretical model, it is also not surprising that participants in contemplation, preparation, or action stages of change were more likely to view the proposed app features as supportive, as they were already interested in improving the targeted health behaviors.29 Similarly, individuals currently using apps are likely more receptive to prototype app features, in general. A surprising finding was that pregnancy was a significant predictor of class membership, with pregnant women being half as likely to be in class 1 (strongly agree). Previous research has reported that, in general, pregnant women are interested in health-related apps with features that provide information specific to pregnancy, such as pregnancy-related risk factors, gestational weight gain, diet and lifestyle, postpartum depression, social support, and early infant feeding.30–32 In light of this, one explanation for the relatively tepid response of pregnant women in this study could be that the features were not specific to dietary and exercise recommendations for pregnancy.
Additionally, mobile health interventions targeting pregnant women often suffer from low enrollment and high attrition, suggesting that, in general, pregnancy may be a challenging time to address health behavior change.33 However, firm conclusions about the allure of mobile apps to address health behaviors during pregnancy cannot be drawn due to a dearth of relevant studies.33 Given that health behaviors during pregnancy can have a profound impact on maternal and child health, it is important for an app designed for WIC clients to specifically address the needs of pregnant women to support a healthful pregnancy.

A strength of this study was the use of a large sample of Texas WIC clients who have experience with technology. Sample demographics were somewhat comparable to Texas WIC, with 56% of the sample being Hispanic, compared to 68% in Texas WIC,34 and 58% being overweight or obese, compared to 52% in Texas WIC.35 Limitations include electronic recruitment of individuals who owned a smartphone, resulting in a sample biased towards technology use.

Conclusion

Given the enthusiastic response to the Texas WIC app prototype, it would be tempting to finalize app development by simply incorporating the features described in this study. However, while many factors may impact the ultimate success of public health apps, perhaps a central piece revolves around the needs and preferences of the intended user, framed within the context of his or her specific life’s challenges.13 A user-centered approach to developing technology-based tools, such as the UCD process, is a critical step in ensuring that public health interventions reach their target audiences and elicit desired health outcomes.14 Given the health status of vulnerable populations, such as low-income women and children participating in WIC,2 developing and implementing efficacious, evidence-based, population-specific tools and technologies that meet the needs of participants is of paramount importance, and may provide a catalyst to improve health equity by removing barriers to accessing information. In the case of the development of a Texas WIC app, engaging clients who are older, in a pre-contemplation stage of change, or pregnant should occur next, so that their specific needs and preferences can be incorporated into the final version. The iterative process of UCD used in this research may serve as a useful framework for development of public health apps.

Acknowledgements

This research was funded by a grant from the Texas Department of State Health Services (contract number 2014-045584). WIC staff provided input on prototype feature design,17 reviewed survey content, and facilitated survey dissemination via their website.

Disclosures

The Institutional Review Boards of Texas State University (2013E3835) and the Texas Department of State Health Services (14-014) approved this study.

Declaration of Competing Interests

All authors have completed the Unified Competing Interest form at www.icmje.org/coi_disclosure.pdf (available on request from the corresponding author) and declare: all authors had financial support from the Texas Department of State Health Services for the submitted work; no financial relationships with any organisations that might have an interest in the submitted work in the previous 3 years; no other relationships or activities that could appear to have influenced the submitted work.

References

1. Legal Momentum. Women and Poverty in America. Available from: https://www.legalmomentum.org/women-and-poverty-america. Accessed December 2016.

2. Adler NE, Stewart J. Health disparities across the lifespan: Meaning, methods, and mechanisms. Ann N Y Acad Sci. 2010;1186:5-23.

3. Eidelman AI, Schanler RJ, Johnston M, et al. Breastfeeding and the use of human milk. Pediatrics. 2012;129(3):e827-e841.

4. Oliveira V, Racine E, Olmsted J, Ghelfi LM. The WIC Program: Background, trends and issues (No. 33847). United States Department of Agriculture, Economic Research Service; 2002.

5. United States Department of Agriculture Food and Nutrition Service. WIC Program: Total Participation. Available from: https://www.fns.usda.gov/sites/default/files/pd/26wifypart.pdf. Accessed July 2017.

6. Special Supplemental Nutrition Program for Women, Infants, and Children. 7 C.F.R. §246.11 1985. Available from: http://www.ecfr.gov/cgi-bin/text-idx?SID=a42889f84f99d56ec18d77c9b463c613&node=7:4.1.1.1.10&rgn=div5#se7.4.246_111. Accessed July 2017.

7. Greenblatt Y, Gomez S, Alleman G, et al. Optimizing nutrition education in WIC: Findings from focus groups with Arizona clients and staff. J Nutr Educ Behav. 2016;48(4):289-294.

8. Cates S, Capogrossi K, Sallack L. WIC Nutrition Education Study: Phase I Report. Alexandria, VA: United States Department of Agriculture, Food and Nutrition Service, Office of Policy Support; 2016. Available from: https://fns-prod.azureedge.net/sites/default/files/ops/WICNutEd-PhaseI.pdf. Accessed June 2017.

9. McHenry G. Evolving technologies change the nature of Internet use. National Telecommunications and Information Administration. Available from: https://www.ntia.doc.gov/blog/2016/evolving-technologies-change-nature-internet-use. Accessed July 2017.

10. Deehy K, Hoger FS, Kallio J, et al. Participant-centered education: Building a new WIC nutrition education model. J Nutr Educ Behav. 2010;42(3S):S39-S46.

11. Schoeppe S, Alley S, Van Lippevelde W, et al. Efficacy of interventions that use apps to improve diet, physical activity and sedentary behaviour: A systematic review. Int J Behav Nutr Phys Act. 2016;13(1):127.

12. Localytics. Helping marketers better understand app user trends. Available from: http://www.localytics.com/resources/app-stickiness-index-q1-2015. Accessed July 2017.

13. Barclay G, Sabina A, Graham G. Population health and technology: Placing people first. Am J Public Health. 2014;104(12):2246-2247.

14. McCurdie T, Taneva S, Casselman M, et al. mHealth Consumer apps: The case for user-centered design. Biomed Instrum Technol Mob Heal. 2012;Suppl:49-56.

15. Vorrink SN, Kort HS, Troosters T, et al. A mobile phone app to stimulate daily physical activity in patients with chronic obstructive pulmonary disease: Development, feasibility, and pilot studies. JMIR mHealth uHealth. 2016;4(1):e11.

16. Texas WIC Nutrition Education Survey – Statewide Report. Texas Department of State Health Services; 2016. Available from: https://www.dshs.texas.gov/wichd/bf/surveysreports.aspx. Accessed July 2017.

17. Biediger-Friedman L, Crixell SH, Silva M, et al. User-centered design of a Texas WIC app: A focus group investigation. Am J Health Behav. 2016;40(4):461-71.

18. Bandura A. Self-efficacy: Toward a unifying theory of behavioral change. Adv Behav Res Ther. 1978;1(4):139-161.

19. Koebnick C, Smith N, Huang K, et al. The prevalence of obesity and obesity-related health conditions in a large multiethnic cohort of young adults in California. Ann Epidemiol. 2012;22(9):609-616.

20. Godin G, Shephard R. Godin leisure-time exercise questionnaire. Med Sci Sport Exerc. 1997;29(6):S36-S38.

21. Wolf RL, Lepore SJ, Vandergrift JL, et al. Knowledge, barriers, and stage of change as correlates of fruit and vegetable consumption among urban and mostly immigrant black men. J Am Diet Assoc. 2008;108(8):1315-1322.

22. United States Department of Agriculture Economic Research Service. Household Food Security Survey Module: Six-Item Short Form. Available from: https://www.ers.usda.gov/media/8282/short2012.pdf. Accessed May 2014.

23. Hagenaars J, McCutcheon A, eds. Applied Latent Class Analysis. Cambridge, UK: Cambridge University Press; 2002.

24. Muthén L, Muthén B. Mplus User’s Guide. 7th ed. Los Angeles, CA: Muthén & Muthén; 2015.

25. Experian. Millennials and Technology: The Natural Order of Things. Available from: http://www.experian.com/blogs/marketing-forward/2014/07/07/millennials-and-technology-the-natural-order-of-things. Accessed July 6, 2017.

26. Nielsen. Millennials are Top Smartphone Users. Available from: http://www.nielsen.com/us/en/insights/news/2016/millennials-are-top-smartphone-users.html. Accessed July 2017.

27. Pew Research Center. U.S. Smartphone Use in 2015. Available from: http://www.pewinternet.org/2015/04/01/us-smartphone-use-in-2015/. Accessed June 2015.

28. Pew Research Center. Social Media Update 2016. Available from: http://www.pewinternet.org/2016/11/11/social-media-update-2016/. Accessed May 2018.

29. Prochaska JO, Velicer WF. The transtheoretical model of health behavior change. Am J Heal Promot. 1997;12(1).

30. Osma J, Barrera AZ, Ramphos E. Are pregnant and postpartum women interested in health-related apps? Implications for the prevention of perinatal depression. Cyberpsychology, Behav Soc Netw. 2016;19(6):412-415.

31. Krishnamurti T, Davis A, Wong-Parodi G, et al. Development and testing of the MyHealthyPregnancy App: A behavioral decision research-based tool for assessing and communicating pregnancy risk. JMIR Mhealth Uhealth. 2017;5(4):e42.

32. Waring ME, Moore Simas TA, Xiao RS, et al. Pregnant women’s interest in a website or mobile application for healthy gestational weight gain. Sex Reprod Healthc. 2014;5(4):182-184.

33. O’Brien CM, Cramp C, Dodd JM. Delivery of dietary and lifestyle interventions in pregnancy: Is it time to promote the use of electronic and mobile health technologies? Semin Reprod Med. 2016;34(2):e22-e27.

34. United States Department of Agriculture Food and Nutrition Services. WIC Racial-Ethnic Group Enrollment Data 2012. Available from: https://www.fns.usda.gov/wic/wic-racial-ethnic-group-enrollment-data-2012. Accessed July 2017.

35. Johnson B, Thorn B, McGill B, et al. WIC Participant and Program Characteristics 2012. Alexandria, VA: United States Department of Agriculture, Food and Nutrition Service; 2013. Available at https://www.fns.usda.gov/wic/women-infants-and-children-wic-participant-and-program-characteristics-2012. Accessed June 2017.

Posted on Dec 4, 2018 in Original Article |

Evaluation of Free Android Healthcare Apps Listed in appsanitarie.it Database: Technical Analysis, Survey Results and Suggestions for Developers

Dr. Lorenzo Di Matteo1, Dr. Carmela Pierri2, M.D. Sergio Pillon3, Eng. Giampiero Gasperini4, Eng. Paolo Preite1, Dr. Edoardo Limone4, Dr. Silvia Rongoni1

1Department of Training, Formit Foundation; 2Board of Directors, UNINT University/ Department of Training, Formit Foundation; 3Department of Cardiovascular Telemedicine, Azienda Ospedaliera San Camillo-Forlanini; 4Department of Strategy and Technologies, Formit Foundation

Corresponding Author: l.dimatteo@formit.org

Journal MTM 7:2:17–26, 2018

doi:10.7309/jmtm.7.2.3

Background: Health apps catalogued in dedicated databases are not scarce, but little is still known about their technical aspects, such as their general level of privacy and security.

Aims: This study aims to analyze free Android health apps listed in a specific database.

Methods: A systematic technical analysis was carried out on a population of 275 free Android apps among those listed in the appsanitarie.it database (“Banca Dati delle app sanitarie”). The analysis followed a defined protocol, with a survey as an operative support tool to examine aspects such as the app rating in the store.

Results: The analysis covered 275 health apps. Cardiology (38 apps) was the most populous medical branch. The overall average app rating was 4.10. Personal data were required at first launch by 18.54% of the apps, and 84.36% allowed only manual data entry. Data sharing was detected in 133 cases. A backup option was provided by 9.45% of the apps. Compliance with some kind of privacy regulation was declared by 13% of the apps, and of these only 19% showed relevance to EU privacy regulation. No reference for the scientific background of the contents was presented by 61.1% of the apps.

Conclusions: Developers should avoid redundant manual data entry in favour of automatic calculation of derived parameters. Moreover, only a limited number of the analyzed apps adopt data protection mechanisms and declare privacy compliance. Security and privacy are generally poor. Survey results suggest there is considerable room for improvement in app design.

Keywords: Telemedicine, eHealth, mHealth, Data Security, Baseline Survey

Introduction

Apps on mobile devices such as smartphones offer many prospects for use in the health and medical fields. The app economy, understood as the whole range of economic activity related to mobile applications, is evolving as rapidly as the smartphone market. It has been reported that the top 10 mobile health apps alone generate up to 4 million free and 300,000 paid downloads per day1.

On the other hand, healthcare research finds that the vast majority of professionals are aware that a lack of interoperability hinders better use of patient-generated data2. Other research shows that more than half of interviewed patients report having used a digital device, including mobile apps, to manage their health, and almost two thirds think it would be helpful for their healthcare providers to have access to their patient-generated data as part of their medical history3.

Studies have shown that patients with chronic diseases are comfortable sharing data with healthcare providers via online patient portals, mobile apps or text messages4. This could bring benefits for both patients and healthcare providers, but it also exposes them, especially patients, to some risks5,6. Unclear disclosures about data processing terms could create privacy risks for the user, and insufficient security could lead to data breaches or data loss, considering also that losing a smartphone could result in a data leak7. Security or data protection may be insufficient if the user is not fully able to prevent the loss of data from the device, or if mechanisms such as encryption or passwords are not available8.

On the other hand, sharing patients’ health data through messaging and multimedia mobile applications is handy for a professional but not fully compliant with the health data protection standards that a healthcare trust certainly adopts9. On the patient side, recent findings concluded that while fewer than half of the analyzed apps are useful to the targeted user, some apps seemed to sacrifice quality and safety to add more functionality10.

The purpose of this study is to carry out a technical analysis of free Android apps listed in a dedicated “healthcare apps” database, “Banca Dati delle app sanitarie” (at http://www.appsanitarie.it/banca-dati-app-sanitarie). The database was developed as part of a Formit Foundation project financed by a grant from the General Directorate of Medical Devices and Pharmaceutical Service of the Italian Ministry of Health in 2015-2016. Launched in 2015, the database was created to list the results of the app census carried out by the Observatory of health apps established by the Formit Foundation.

Apps in the database were selected according to a specific definition, “healthcare apps”, and a selection workflow (see the Methods section for a full description). Database apps, both Android and iOS, were selected in the stores through specific criteria (summarized in a workflow) and could be used in a healthcare context by patients and physicians. The database contains 659 “healthcare apps”, divided into medical branches; 2% of them carry a CE mark as a medical device. The database was chosen as the starting point for app selection because of its clear definition and selection workflow.

The study presented here did not examine the inner working mechanisms of the apps; instead, it consisted of a detailed technical analysis of the functionalities available to users. The analysis addressed four groups of app characteristics: general app details; features such as requested data, data entry, data access, connectivity, online registration and sharing; password and backup security mechanisms; and privacy terms and scientific references. The regulatory framework considered for privacy is the General Data Protection Regulation, GDPR (Regulation EU 2016/679)11, because it applies across the national legislation of all Member States, together with the Privacy Code of Conduct on mHealth apps as regards guidelines to enhance privacy in this field12.

Methods

Ethical statement

This research project was conducted in full compliance with research ethics norms. The research involved the use of mobile devices and apps. One part of the research team handled survey development and data gathering, while another part completed the survey. The analysis of results was carried out by the whole team.

App selection

Apps were selected from the free Android apps (downloadable from Google Play) in every medical branch of the database (Banca Dati App sanitarie, BDA http://www.appsanitarie.it/banca-dati-app-sanitarie). Apps that require registration with medical credentials on a dedicated platform, or that need specific devices to work, were excluded from the analysis. The apps listed in the database had been chosen before this study according to a specific definition and workflow; consequently, not every health app is listed in the database.

Apps defined as healthcare apps (App sanitarie) in the database are:

• CE marked Medical device apps (in order to achieve CE mark for their products in Europe, medical device manufacturers must comply with the appropriate medical device directive set forth by the EU Commission);

• Apps not developed by the producer for a medical purpose but meeting one of these characteristics:

– receiving data from medical devices;

– processing and transforming healthcare and patient-related data;

– interacting with a non-medical device that displays, stores, analyzes and transmits data;

– receiving health data entered manually by the user that are not solely diet and fitness oriented.

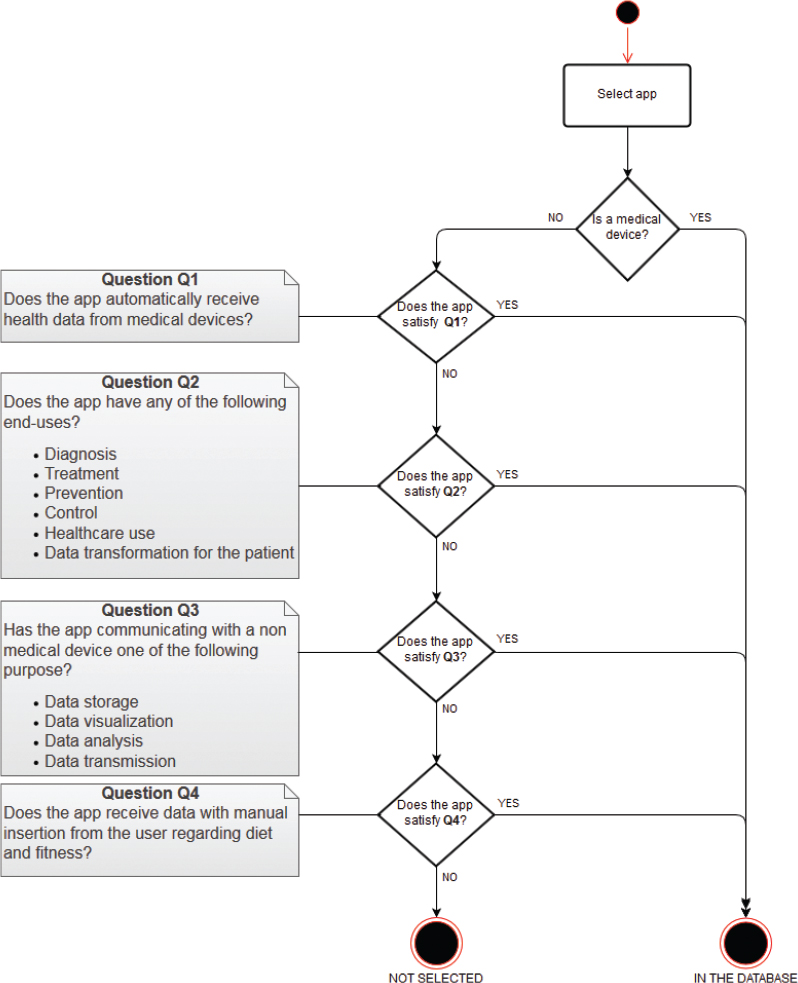

Based on this app definition, the following workflow was used:

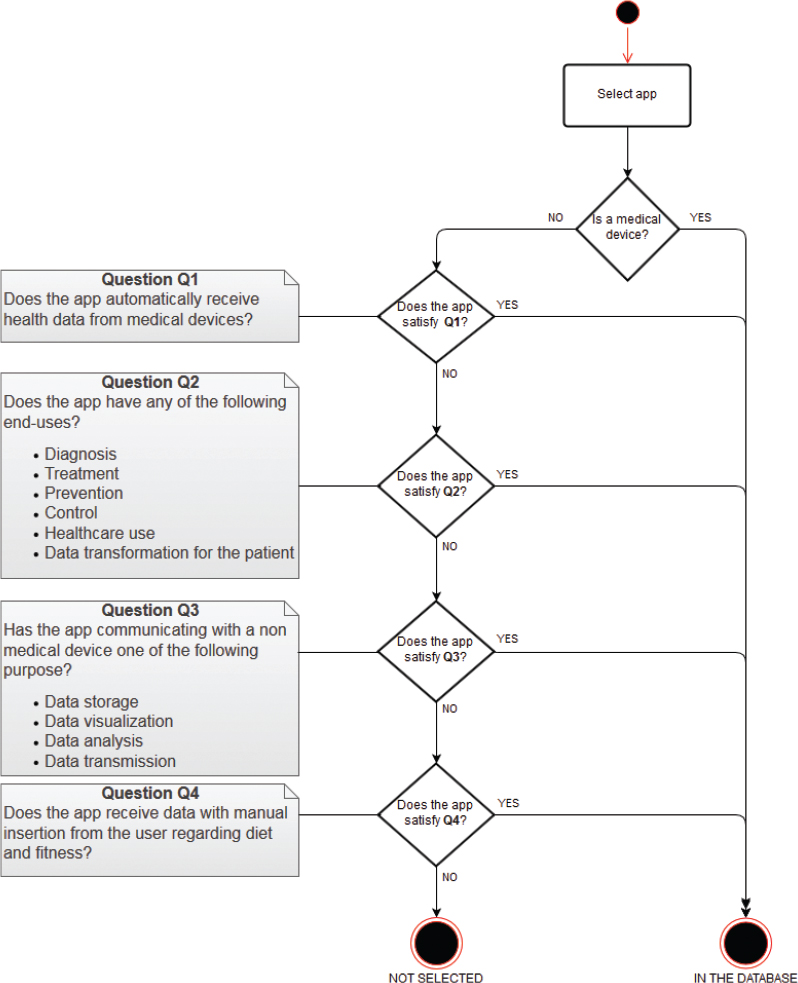

Figure 1: App Selection Criteria

The apps selected from the database to be analyzed satisfy the following operative criteria:

– Available for Android (downloadable from Google Play);

– Free;

– With no mandatory registration to a platform requiring medical credentials;

– Usable independently of connection with external devices.

Technical analysis

The apps underwent technical analysis over 2 months, from March to April 2017.

The aim of the technical analysis was to examine some of the operating mechanisms of the selected apps. This was done following a Technical Analysis Scheme organized into technical macro-areas, to identify distinct functional aspects, with a metrical-statistical question-answer structure to ensure that results are measurable and repeatable.

To carry out the technical analysis of the software, a survey was designed and completed. The analysis was designed to focus on the following elements:

- Information useful to identify the app;

- Operating characteristics of the app;

- Security related to password, back-up and data encryption;

- Presence of privacy and condition terms.

The technical analysis consisted of survey development, including design and deployment, followed by app analysis, data gathering and analysis of results.

Survey development

To analyze the selected apps, a survey was designed with sections corresponding to the types of data to be collected on the four aspects under analysis, organized in a question-answer structure. The survey comprised the following sections:

- App general characteristics, such as name, version, developer name and rating in the store;

- App features, such as requested personal data, mode of data entry, possibility to delete or change data, connectivity, online platform registration and sharing on social media;

- App security, such as password registration, password recovery, password security level, backup possibility, backup destination and backup encryption;

- App privacy and reliability, such as declaration of compliance with a privacy regulation, compliance with European privacy regulation, and scientific sources or bibliography.

The online survey was built with the open-source application LimeSurvey.

App analysis

The technical-functional analysis was performed by accessing the survey after authentication with a username and password. Mobile devices running the Android operating system were used, with the newest version of the operating system available at the time. Apps were searched for on the Google Play store and downloaded if they were free and available in the country where the study took place (Italy). Once installation was complete, each app was checked for mandatory registration on a platform reserved for healthcare professionals and for a required connection to an external device. If neither applied, the app was analyzed by completing the survey.

Data gathering and Results analysis

Data gathering through LimeSurvey provided the full set of data from the technical analysis. The results were analyzed on a single compiled dataset, using descriptive statistics to summarize and highlight aspects of the data collected. The collected data were stored in a database managed directly by LimeSurvey, which made it possible to export information in formats suitable for statistical work.
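The kind of descriptive summary described above can be sketched in a few lines. This is a minimal illustration and not the authors' actual workflow: the column name `data_entry` and the sample rows are hypothetical stand-ins for a survey export in CSV format.

```python
import csv
import io
from collections import Counter

# Hypothetical stand-in for a CSV export of survey answers.
# The column names and rows are illustrative only, not study data.
SAMPLE_EXPORT = """app_id,data_entry
1,manual
2,manual
3,automatic
4,both
5,manual
"""


def frequency_table(csv_text, column):
    """Return {answer: (count, percent)} for one survey column."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    counts = Counter(row[column] for row in rows)
    total = sum(counts.values())
    return {answer: (n, round(100 * n / total, 2)) for answer, n in counts.items()}


table = frequency_table(SAMPLE_EXPORT, "data_entry")
for answer, (n, pct) in sorted(table.items()):
    print(f"{answer}: {n} apps ({pct}%)")
```

The same function could be applied to each closed question in turn to produce the "per number of apps" tables reported in the Results.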

Results

App general characteristics

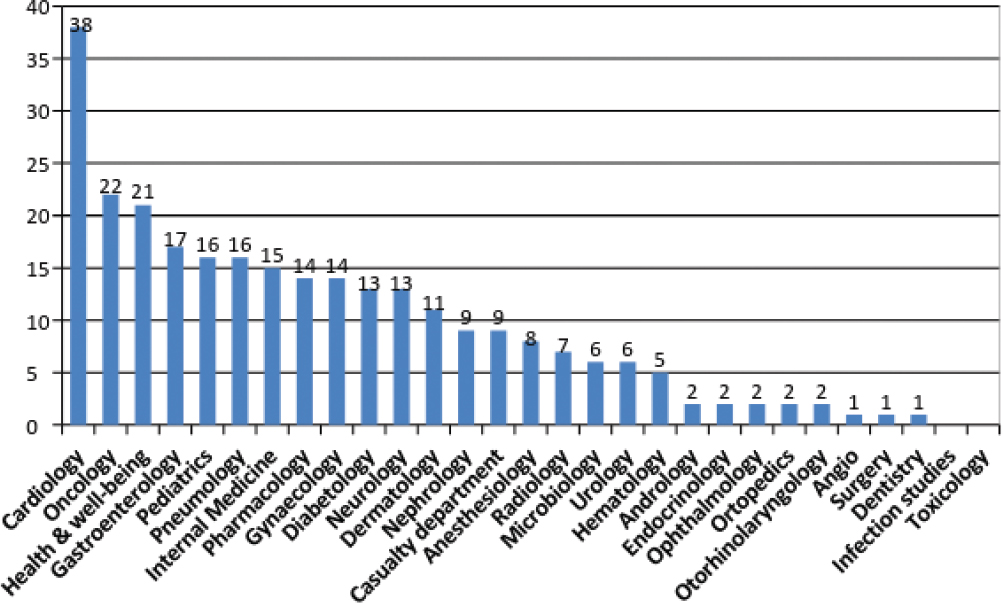

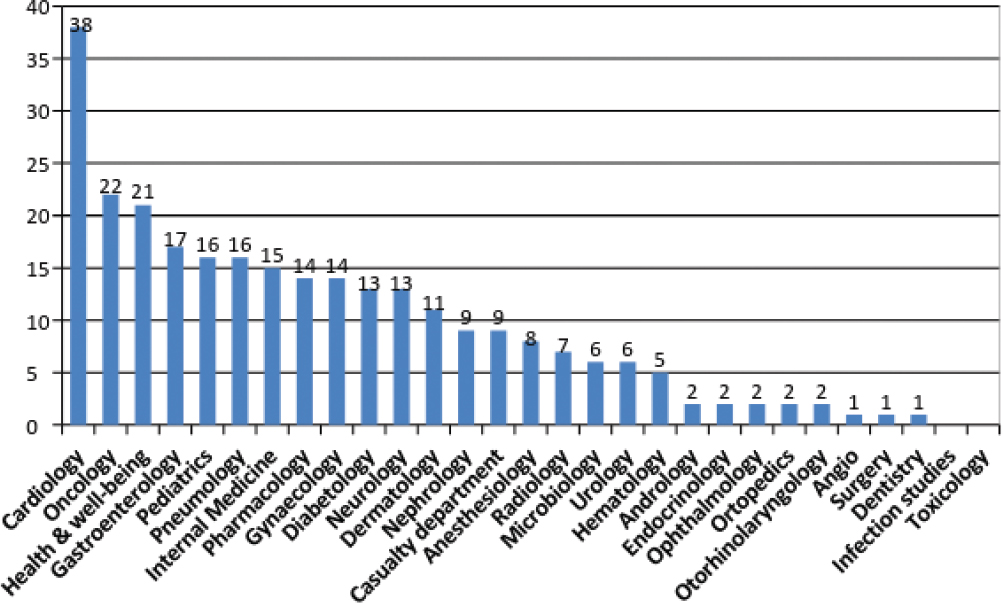

The analysis covered 275 of the 659 apps in the database at the time of the study, as a result of the operative criteria described in the Methods section. The most populous medical branches were cardiology (38 apps), oncology (22) and health & well-being (21), as shown in Figure 2.

Figure 2: Number of apps per medical branch

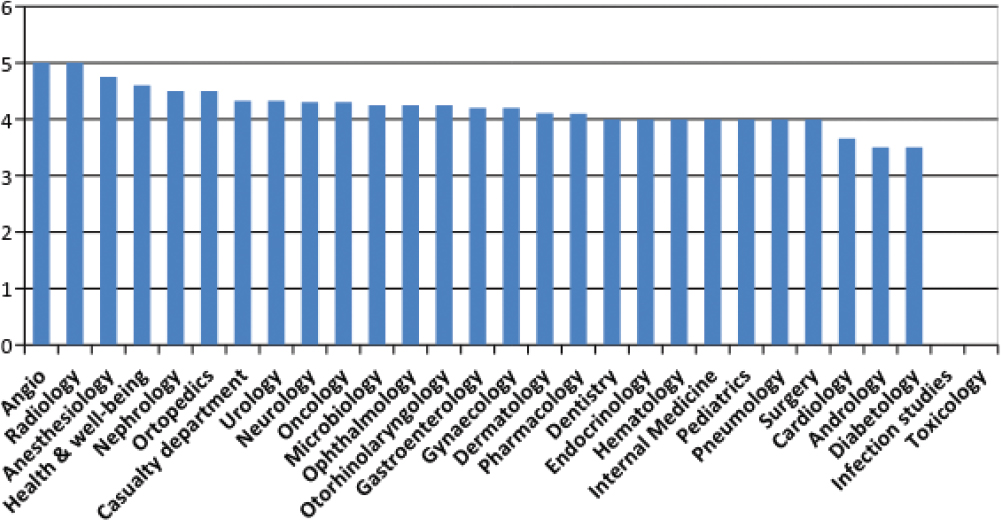

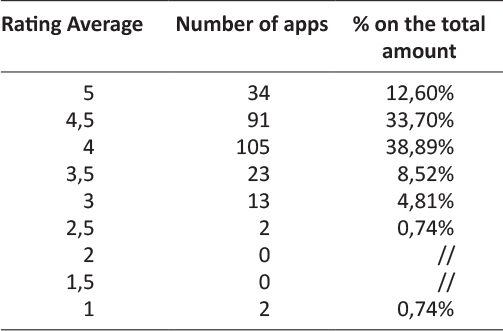

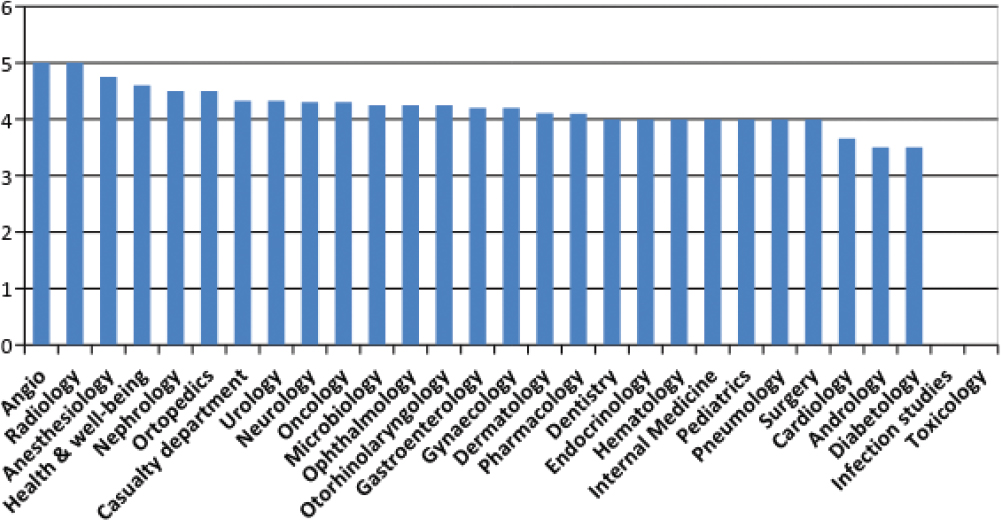

The app rating in the store is expressed by users on a Likert scale from 1 to 5, and the store shows the average of all ratings for an app on an incremental scale. Each app’s rating average was rounded down to simplify data collection. The mean of the app rating averages was 4.10, with the lowest app rating average being 1 and the highest 5. In the most populous medical branches, the mean of the app rating averages was 3.66 in cardiology, 4.33 in oncology and 4.60 in health & well-being.

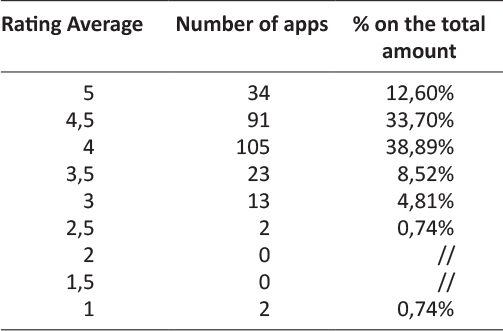

As Figure 3 shows, the average app rating in the store is high for almost every medical branch, in line with the overall mean of 4.10 on the Likert scale. Most of the analyzed apps fall in the upper ranks of the rating scale. Excluding 5 apps with no rating in the store, 105 of the 270 rated apps (38.89%) had a rating average of 4, 91 apps (33.70%) had an average of 4.5 and 34 apps (12.60%) had a rating average of 5. However, store ratings can be subject to distorting mechanisms, such as reviews directly or indirectly linked to the developers or a very small number of reviews.

Figure 3: Average app rating per medical branch

Table 1: Rating average per number of apps

App features

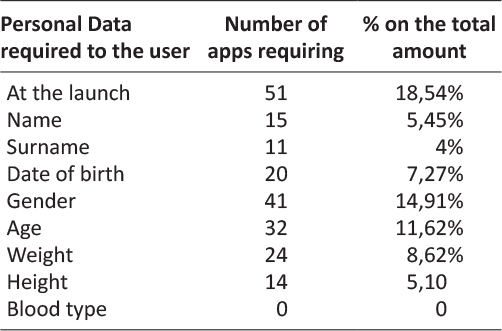

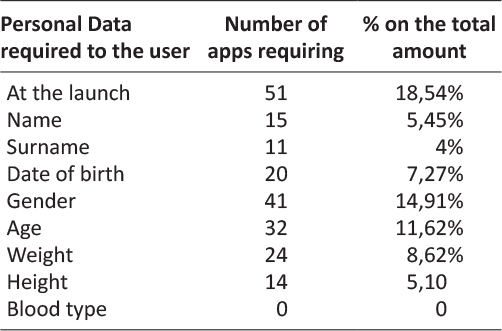

Health apps generally need data input to perform one or more of their features. Of the analyzed apps, 18.54% required personal data at first launch in order to create a user profile. An app can ask the user for one or more of the data items listed in Table 2. The most frequently required item was gender, for 14.91% of the apps (41), followed by age for 11.62% (32) and weight for 8.62% (24).

Table 2: Personal Data required at first launch per number of apps

Table 3: Modality of data entry per number of apps

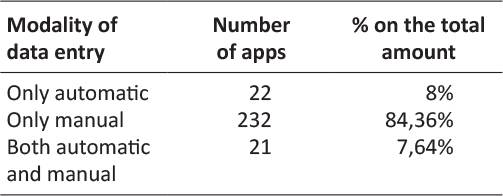

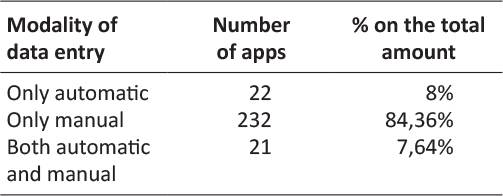

The mode of data entry followed the part of the survey section on personal data requests. Data entry could take place automatically through synchronization with an external device, only manually, or both. The great majority of the apps, 84.36% (232), allowed only manual data entry. Automatic-only entry was allowed by 8% of the apps, and both modes by 7.64%.

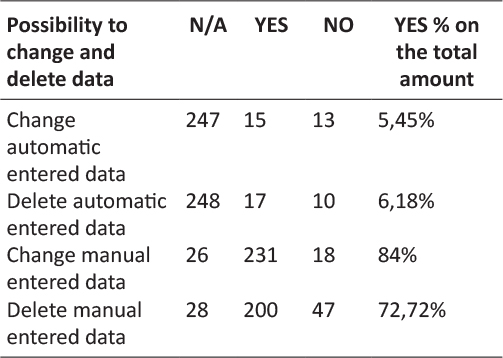

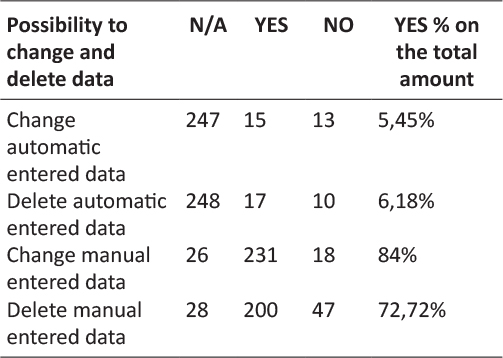

Similarly, results on the possibility of changing and deleting entered data showed that manually entered data could be changed in 84% of the apps and deleted in 72.72%. Manually entered data could not be changed in only 18 apps and could not be deleted in 47. Only 5.45% of the analyzed apps allowed automatically entered data to be modified and 6.18% allowed it to be deleted. Accordingly, the share of apps for which changing and deleting automatically entered data was not applicable (N/A) almost matches the share in which manually entered data could be changed and deleted, and vice versa.

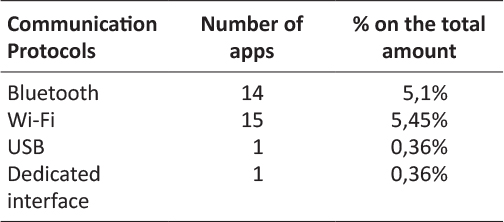

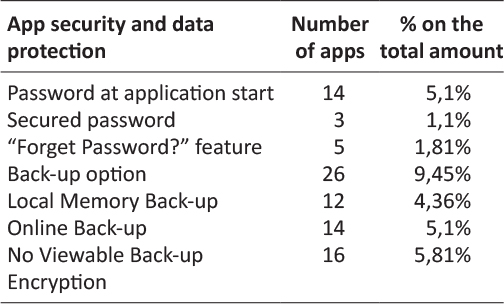

Table 4: Communication protocols per number of apps

Table 5: Communication protocols per number of apps

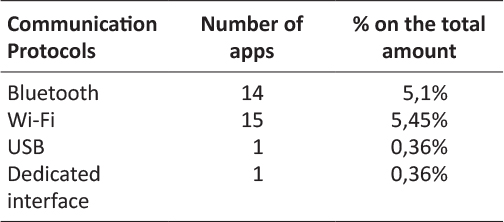

In line with the previous survey results, the most used communication protocol for exchanging data with other systems or external medical devices was Wi-Fi, in 5.45% of the apps, followed by Bluetooth in 5.1%. USB and a dedicated interface were used as communication protocols by only two of the analyzed apps.

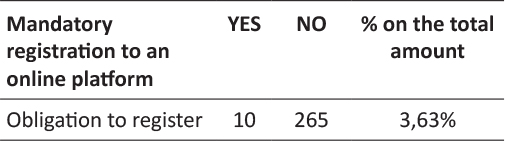

With regard to communication and data exchange with online data storage services, the analysis looked at how widespread mandatory registration on an online platform is as a condition for full use of the app. Apps requiring registration on an online platform generally allow the data in the user profile to be backed up through a dedicated feature. Only 3.63% of the apps required mandatory registration on an online platform.

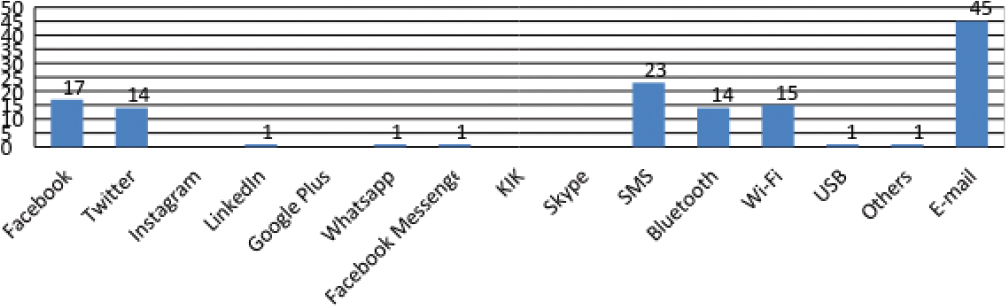

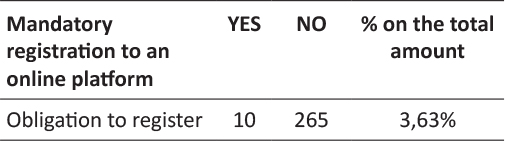

A considerable number of the analyzed apps offered a “share” feature for different communication channels and social media. An app can offer more than one data sharing option. Across the 133 instances of data sharing detected, the most frequent channel was e-mail (45 apps), almost double the second most frequent, SMS (23). Sharing on social media was possible in only 17 apps for Facebook and 14 for Twitter. Other channels, not initially included in the survey but recorded in dedicated free-text fields, were Hangouts, noted 12 times as a social medium, and Google Drive, noted 10 times as another sharing channel.

Table 6: Mandatory registration to online platforms per number of apps

App security

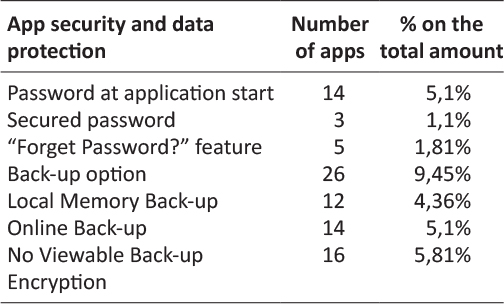

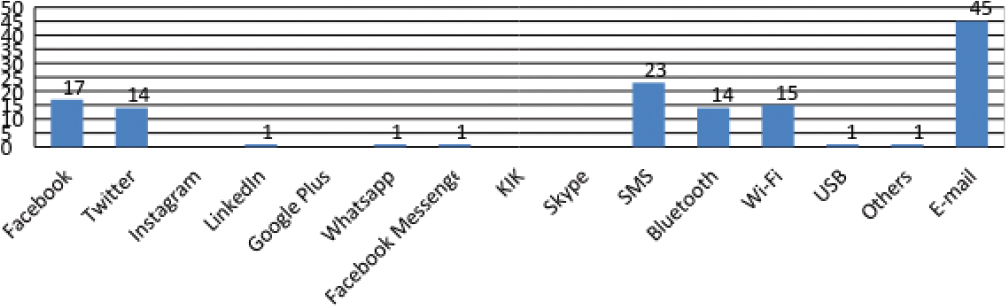

Results on app security and data protection showed that only a few of the analyzed apps provide password registration. Only 5.1% of the apps allowed a password to be created at app start, and only 1.1% required a secure password. Of all the apps, only 1.81% provided password recovery, generally offered as a “Forgot password?” button that sends a new password to a previously saved e-mail address.

As regards data storage, it was possible both locally, in the smartphone memory, and remotely, with online storage services. Overall, 9.45% of the analyzed apps provided a backup option. Online backup was possible in 5.1% of the apps and local memory backup in 4.36%. As for backup encryption that is clear to the user, 5.81% of all apps, more or less all the apps with a backup option, offered no clear information about encryption. Naturally, it was not feasible to check for encryption in the vast majority of the apps (93.81%), which had no backup.

Figure 4: Data sharing channels per number of apps

Table 7: App security and data protection

App privacy and reliability

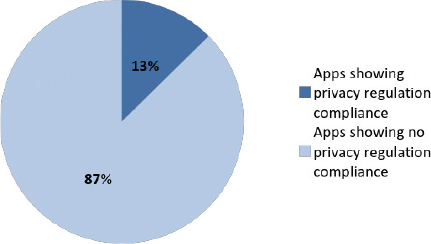

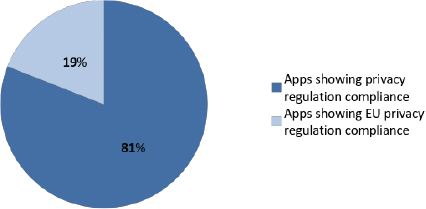

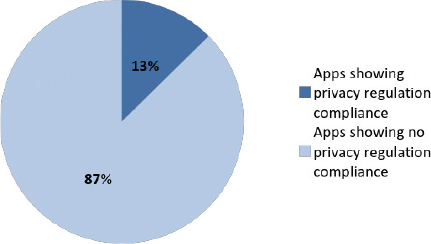

The privacy analysis showed that only 13% of all apps declare compliance with any kind of privacy regulation concerning the user’s personal and health data. The remaining 87% showed no declaration of compliance with any privacy regulation in any message to the user, either at launch or in the menu.

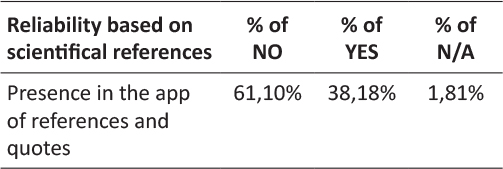

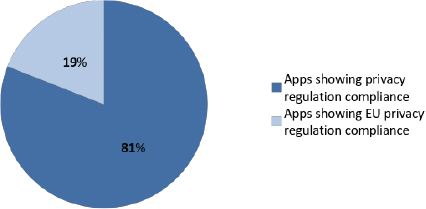

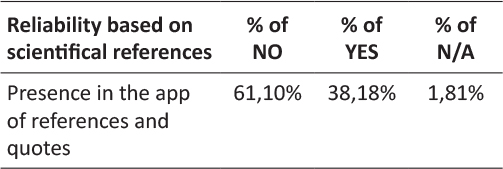

Among the 13% of apps declaring compliance with a privacy regulation, only 19% showed some relevance to EU privacy regulation. National regulations of Member States were counted as part of the wider European privacy regulation because, before the General Data Protection Regulation, Directive 95/46/EC had been transposed into national data protection and privacy acts. The remaining 81% showed instead an international declaration based on an End-User License Agreement (EULA) model or some other type of generic declaration.

As regards reliability, the analysis considered whether the app cited scientific sources. Scientific references and citations were generally found in the info or bibliography section of the app menu. Of the analyzed apps, 61.1% presented no reference or citation for the scientific background of their contents, 38.18% presented a bibliography or cited studies in a dedicated part of the menu, and for 1.81% this check was not applicable.

Figure 5: Percentage of Apps Showing Declaration of Privacy Regulation Compliance

Figure 6: Percentage of apps showing EU privacy regulation Compliance among apps declaring privacy regulation compliance

Table 8: Scientific references in the app

Discussion

By 2021, Bring-Your-Own-Device connectivity is expected to be preferred by select patient groups and to be used for the remote monitoring of 22.9 million patients13. The large number of health apps in stores such as Google Play is therefore no surprise, although the number shrinks when using a database based on a specific definition of mHealth app with a clearly defined selection workflow. A further limit on the analysis was the requirement that apps be free to download and that their functionalities could be analyzed without restrictions, such as a required external device or mandatory registration on a platform requiring medical credentials.

On the other hand, the strict database selection criteria and, subsequently, the operative criteria of the analysis produced a homogeneous app population with uniform characteristics to analyze. Because of the app definition on which the database is based, the apps analyzed were drawn from a population that excludes low-quality apps. This explains the small number of analyzed apps in some specialized medical branches and the limited findings for apps in those branches.

The Likert-scale rating is an important score to observe with regard to the adoption of an app by users, although it can be subject to some distortions, and new assessment tools continue to be developed14–18. Usability is generally measured by the perceived ease and enjoyment of using the app19, but a user could give a poor rating to an application with solid data security mechanisms and an unappealing layout, and a high rating to a more attractive but less secure app. App ratings are therefore important but only a partial measure.

The request for personal data raises other considerations. Processing personal data would suggest adopting a database encryption mechanism, but once an app is “compiled” into an “application package”, it can no longer be opened and verified without violating the developer’s copyright. It is also true that both Apple and Google have enabled encryption of the application database by default.

It should also be noted that asking the user for both age and date of birth affects data quality management. Most of the apps allow only manual data entry, which is an important factor because of its possible repercussions on data quality: the more input errors are reduced, the higher the data quality. However, data entry may be manual either because of an omission by the developer or because the app needs it in order to run.
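As an illustration of avoiding redundant input, age can be derived from the date of birth rather than requested separately. The following is a minimal sketch; the function name `age_in_years` is hypothetical and not taken from any analyzed app.

```python
from datetime import date


def age_in_years(birth_date, today):
    """Whole years elapsed between birth_date and today."""
    years = today.year - birth_date.year
    # Subtract one year if this year's birthday has not happened yet.
    if (today.month, today.day) < (birth_date.month, birth_date.day):
        years -= 1
    return years


# Hypothetical user born on 15 June 1980, evaluated on 1 April 2017.
print(age_in_years(date(1980, 6, 15), date(2017, 4, 1)))
```

With a single stored date of birth, the two values can never disagree, which removes one source of inconsistent records.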

For automatic data entry, some applications use communication protocols such as Bluetooth, Wi-Fi or USB to connect with other systems or external devices. As for data exchange, Android allows the developer to easily implement communication with social networks or instant messaging systems, with the possibility of sharing data with other users. The choice of e-mail for sharing data is no surprise: the versatility of the medium makes it well suited to sending ordinary messages to a doctor. Similarly, apps use SMS to share data, since many applications produce a set of numeric values that can easily be included in a text message.

As regards data protection, the use of a secure password with a minimum of eight characters combining letters and alphanumeric symbols seems to be rare. By contrast, a five-digit personal identification number can easily be broken by a brute-force attack that tries all possible combinations. It is also worth mentioning that a strong password is a requirement that could be enforced by design.
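The difference between the two search spaces can be made concrete with a short calculation. The sketch below only counts combinations; the 62-character alphabet (upper- and lower-case letters plus digits) is an assumption made for illustration, not something specified in the study.

```python
import string


def search_space(alphabet_size, length):
    """Number of distinct secrets of exactly `length` symbols."""
    return alphabet_size ** length


# Five-digit PIN: 10 possible symbols per position.
pin_space = search_space(10, 5)

# Eight-character password over letters and digits (assumed alphabet).
alphabet = string.ascii_letters + string.digits
password_space = search_space(len(alphabet), 8)

print(f"5-digit PIN combinations:      {pin_space:,}")
print(f"8-character password combos:   {password_space:,}")
print(f"Ratio (password / PIN):        {password_space // pin_space:,}")
```

An exhaustive attack on the PIN needs at most 100,000 attempts, while the assumed eight-character password space is more than two billion times larger.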

Apps including a backup function were also few, but when an app does not need to keep track of “persistent” data, backup is unnecessary. More than half of the apps offering backup use online services (a cost to the app provider that local memory backup does not entail). The user may not be adequately aware of the technical and legal mechanisms that regulate cloud computing services20, whereas local backup allows complete control over the data. Insufficient information about data protection controls was found, apparently because app descriptions in the store generally focus on advertising while neglecting privacy and security reliability.

The great majority of the apps showed no declaration of compliance with any kind of privacy regulation. Calculator apps and similar apps reset at every exit, deleting all entered data without any real data processing, which may partly account for this. It does not, however, explain the absence of scientific references and citations in almost 6 out of 10 apps; only a few apps presented clear scientific references. For some apps, especially scale calculators, a professional may not need scientific references to identify or use a well-known tool in his or her medical branch, but for a user with no particular knowledge of medical science the lack of information could lead to misreading the outcome and to a false-negative self-diagnosis.

Conclusions

Although the analysis was carried out on a limited number of apps, developers should in any case adopt a data-quality-oriented approach in order to strike the right balance between manual data entry and automatic calculation. Manual data entry should be reduced and automation increased. Moreover, format-control parameters or alternative controls (such as sliders or date pickers) should be used to reduce data-entry mistakes. Replacing the classic text field speeds up the filling process and reduces typing errors. It would also be useful to seek a better understanding of the usability perceived and desired by users, directing more research attention to this aspect. On the other hand, it is necessary to examine the feasibility of mHealth in the healthcare context, such as the effectiveness of mobile phone applications in healthcare services. One way forward could be observational studies of patients or physicians adopting mHealth solutions.
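As one possible illustration of format control, the sketch below validates a numeric entry against a permitted range, much as a slider would constrain it. The function name `parse_bounded` and the 1 to 400 kg weight range are assumptions chosen for the example, not values from the study.

```python
def parse_bounded(raw_text, minimum, maximum):
    """Parse a number typed into a text field.

    Returns the value as a float, or None when the text is not a number
    or falls outside the range [minimum, maximum].
    """
    try:
        # Accept a decimal comma as well as a decimal point.
        value = float(raw_text.replace(",", "."))
    except ValueError:
        return None
    if not (minimum <= value <= maximum):
        return None
    return value


# Hypothetical plausibility range for body weight in kilograms.
WEIGHT_MIN_KG, WEIGHT_MAX_KG = 1.0, 400.0

for typed in ["72,5", "725", "abc"]:
    print(repr(typed), "->", parse_bounded(typed, WEIGHT_MIN_KG, WEIGHT_MAX_KG))
```

A missing decimal separator ("725" instead of "72,5") is rejected here instead of being stored silently, which is the kind of typing error a bounded control prevents by construction.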

Acknowledgements

We would like to thank the General Directorate for Medical Devices and the Pharmaceutical Service of the Ministry of Health (Italy), especially Dir. Marcella Marletta, Eng. Pietro Calamea and Dr. Paola D’Alessandro for their support.

References

1. Mobile Health Market Report 2013-2017, research2guidance, March 2013:7.

2. Compton-Phillips A, What Data Can Really Do for Health Care, Care Redesign, Nejm Catalyst, March 2017:6-7.

3. Patient Expectations of Medical Information Sharing & Personalized Healthcare, Transcend Insights, Survey Report, February 2017.

4. The 2017 Patient Engagement Perspectives Study, CDW Healthcare, 2017.

5. Mitigate Cyberattacks with HIPAA-Compliant Communications, HIPAA White Paper, 2017.

6. Mobile Malware Evolution 2016, Kaspersky Lab, March 2016.

7. “What A Difference A Year Makes”, Healthcare Breach Report, Bitglass, January 2016.

8. “Is Your Data Security Due For a Physical?”, Healthcare Breach Report, Bitglass, January 2015.

9. DeepMind Health Independent Review Panel Annual Report, July 2017:14.

10. K. Singh, K. Drouin, L. P. Newmark et al., Developing a Framework for Evaluating the Patient Engagement, Quality, and Safety of Mobile Health Applications, The Commonwealth Fund, February 2016.

11. Regulation (EU) 2016/679 of the European Parliament and of the Council of 27 April 2016 on the protection of natural persons with regard to the processing of personal data and on the free movement of such data, and repealing Directive 95/46/EC (referred also as General Data Protection Regulation) http://eur-lex.europa.eu/legal-content/GA/TXT/?uri=uriserv:OJ.L_.2016.119.01.0001.01.ENG.

12. Privacy Code of Conduct on mHealth apps, https://ec.europa.eu/digital-single-market/en/privacy-code-conduct-mobile-health-apps.

13. mHealth and Home Monitoring – 8th Edition, Berg Insight, February 2017.

14. Stoyanov, Stoyan R et al. “Mobile App Rating Scale: A New Tool for Assessing the Quality of Health Mobile Apps.” Ed. Gunther Eysenbach. JMIR mHealth and uHealth 3.1 (2015): e27. PMC. Web, 26 July 2017.

15. Stoyanov, Stoyan R et al. “Development and Validation of the User Version of the Mobile Application Rating Scale (uMARS).” Ed. Gunther Eysenbach. JMIR mHealth and uHealth 4.2 (2016): e72. PMC. Web. 27 July 2017.

16. Domnich, Alexander et al. “Development and Validation of the Italian Version of the Mobile Application Rating Scale and Its Generalisability to Apps Targeting Primary Prevention.” BMC Medical Informatics and Decision Making 16 (2016): 83. PMC. Web. 27 July 2017.

17. Bradway, Meghan et al. “mHealth Assessment: Conceptualization of a Global Framework.” Ed. Mircea Focsa. JMIR mHealth and uHealth 5.5 (2017): e60. PMC. Web. 27 July 2017.

18. Baptista, Shaira, Brian Oldenburg, and Adrienne O’Neil. “Response to ‘Development and Validation of the User Version of the Mobile Application Rating Scale (uMARS).’” Ed. Gunther Eysenbach. JMIR mHealth and uHealth 5.6 (2017): e16. PMC. Web. 27 July 2017.

19. J. Nielsen, Usability 101: Introduction to Usability, January 4, 2012, https://www.nngroup.com/articles/usability-101-introduction-to-usability/.

20. Griebel, Lena et al. “A Scoping Review of Cloud Computing in Healthcare.” BMC Medical Informatics and Decision Making 15 (2015): 17. PMC. Web. 27 July 2017: 13.

Posted on Dec 4, 2018 in Original Article |

Use of Personal Devices in Healthcare: Guidelines From A Roundtable Discussion

Laura Vearrier, MD1, Kyle Rosenberger, M.Ed2, Valerie Weber, DMD, MA3

1Assistant clinical professor, Department of Emergency Medicine, Drexel University College of Medicine, Philadelphia, PA; 2Instructional Designer, Ohio University Heritage College of Osteopathic Medicine and Ohio University’s Office of Instructional Innovation, Athens, OH; 3Assistant clinical professor, Department of General Dentistry and Oral Medicine, University of Louisville School of Dentistry, Louisville, KY.

Note: The corresponding author is not a recipient of a research scholarship.

Journal MTM 7:2:27–34, 2018

doi:10.7309/jmtm.7.2.4

Background: In recent years, smartphone use in professional settings has been increasing, particularly among physicians. This increase brings both benefits and drawbacks. Despite this, there is relatively limited peer-reviewed medical literature on the subject. Thus, suitable guidelines for smartphone use in the healthcare setting are needed.

Aims: This article presents guidelines for professional conduct related to the use of personal devices, such as smartphones, in the healthcare setting.

Methods: These guidelines were developed through an interdisciplinary roundtable discussion at the 2016 Academy for Professionalism in Health Care Conference in Philadelphia, PA.

Results: As a result of the roundtable discussions, several guidelines were developed. First, healthcare providers should be trained on the danger of distractions caused by personal devices and how to minimize them in a clinical setting. Second, the use of smartphones for personal use should be limited to specified use areas; however, if they are present during a patient encounter, they should be set to a mode that eliminates or minimizes interruptions. Third, providers should seek permission from patients prior to integrating smartphones into the provider-patient relationship. Finally, smartphone photography, while being a potential tool to improve patient care, should be used with caution concerning patient autonomy and privacy.

Conclusion: The guidelines serve as a foundation from which professionalism with regard to personal device use can be further developed.

Keywords: Professionalism, Smartphone, Physicians, Photography, Delivery of Health Care, Clinical Practice, Telemedicine

Introduction

In the last few years, the use of personal devices such as smartphones has been rapidly increasing, and smartphone ownership is highest among young adults of higher income and education level.1–2 This trend is being mirrored in the healthcare setting. Nearly all physicians and nurses own smartphones.3–4 Physicians’ usage of smartphones for professional purposes increased steadily from 68% in 2012 to 84% in 2015.5 The Boston Consulting Group and Telenor Group remarked that the “smartphone is the most popular technology among doctors since the stethoscope”.6 A survey study of nurses reported that more than half of nurses have used their smartphone instead of asking a colleague for information.4

There are benefits and drawbacks of providers utilizing their smartphones in the healthcare setting for personal and professional purposes. With computing power and Internet connectivity, personal devices give providers access to textbooks, journal articles, practice guidelines, clinical calculators, and medical applications. Smartphones are improving the efficiency and accuracy of communication. Physicians and nurses are using short messaging services (SMS) to communicate patient information, and smartphones have been reported to increase the connectedness of medical trainees with their supervisors.7–9 Smartphones are also improving communication between providers and patients. The use of videos on personal devices has been reported to be an efficient and effective way to educate patients about their disease, resulting in increased medication compliance, and physicians are using smartphones to monitor patients remotely.10,11 Drawbacks of such constant connectivity include a risk for distraction from patient care. Providers may be interrupted for less acute clinical issues in addition to personal calls, texts, emails, social media, and applications. Personal devices also create a physical barrier between the user and the rest of the world. This barrier translates into cognitive and psychological barriers, and patients are often unaware of the clinical benefits of smartphones.12,13

Despite the ubiquity of smartphones in healthcare, there is limited peer-reviewed medical literature on the professionalism of smartphone use in the healthcare setting. An Ovid Medline keyword search combining “professionalism” with any of the following terms: “smartphone”, “smart phone”, “cell phone”, “mobile phone”, “tablet” or “personal device”, yielded only seven results (search performed May 2016). There is a need for guidance and education regarding professional conduct and personal device use in the healthcare setting. In a survey study of medical students, the majority reported insufficient education from either their medical school curriculum or their senior residents or attendings on appropriate and inappropriate use of mobile devices to communicate patient information and on how to conduct themselves professionally with mobile technology.14
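The keyword search described above has the shape of a boolean query: one required term intersected with any of several device terms. The short Python sketch below only illustrates that structure. The helper name `build_query` and the generic `AND`/`OR` string format are assumptions made for illustration; the article does not state the exact Ovid Medline syntax that was used.

```python
from typing import Sequence

# Device terms quoted in the article's description of the search.
DEVICE_TERMS = [
    "smartphone",
    "smart phone",
    "cell phone",
    "mobile phone",
    "tablet",
    "personal device",
]


def build_query(required: str, any_of: Sequence[str]) -> str:
    """Hypothetical helper: combine one required term with any of several others.

    Produces a generic boolean string, not verified Ovid Medline syntax.
    """
    alternatives = " OR ".join(f'"{term}"' for term in any_of)
    return f'"{required}" AND ({alternatives})'


QUERY = build_query("professionalism", DEVICE_TERMS)
print(QUERY)
```

Writing the query this way makes explicit that a record must match “professionalism” and at least one device term, which is the intersection the text describes.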

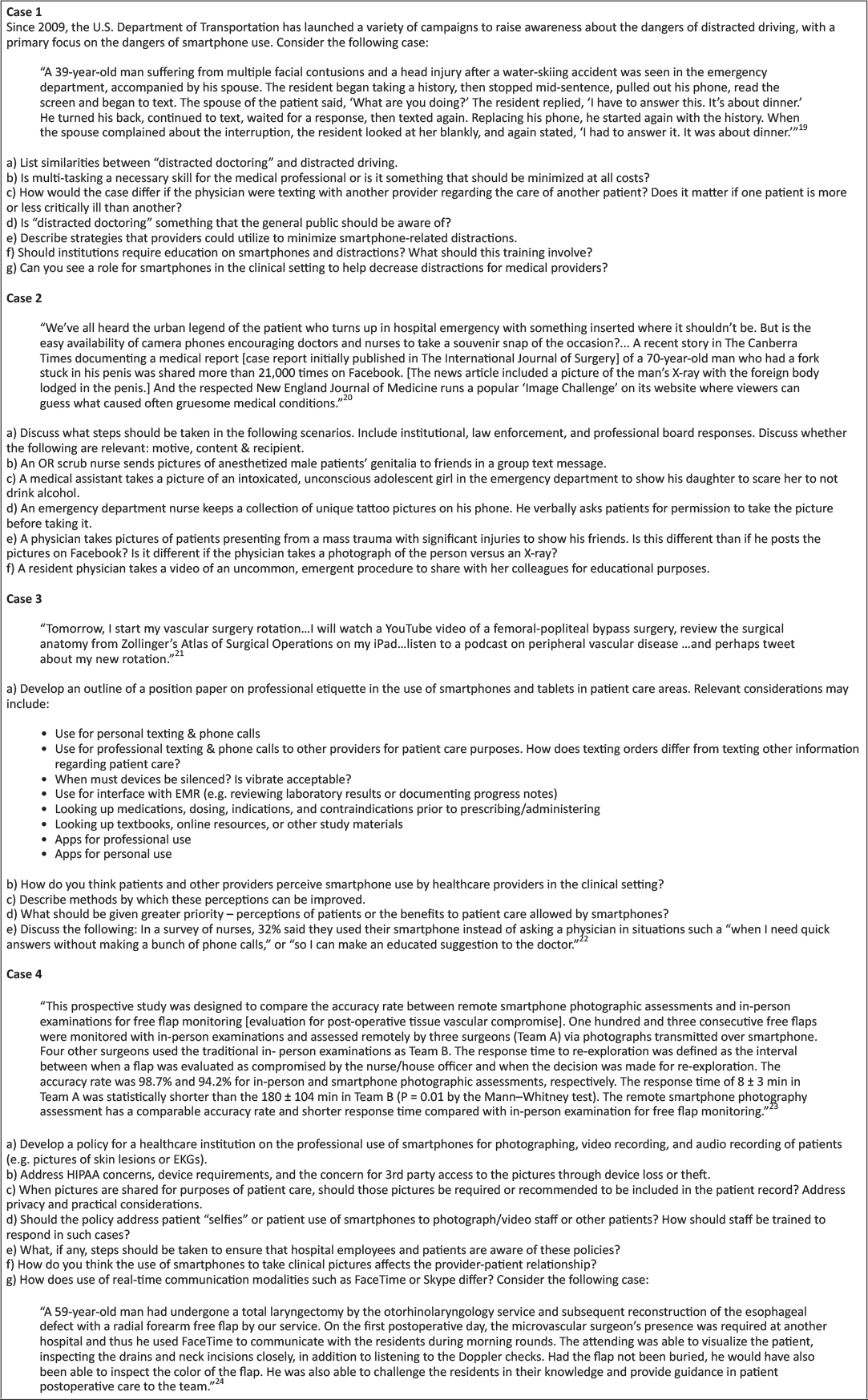

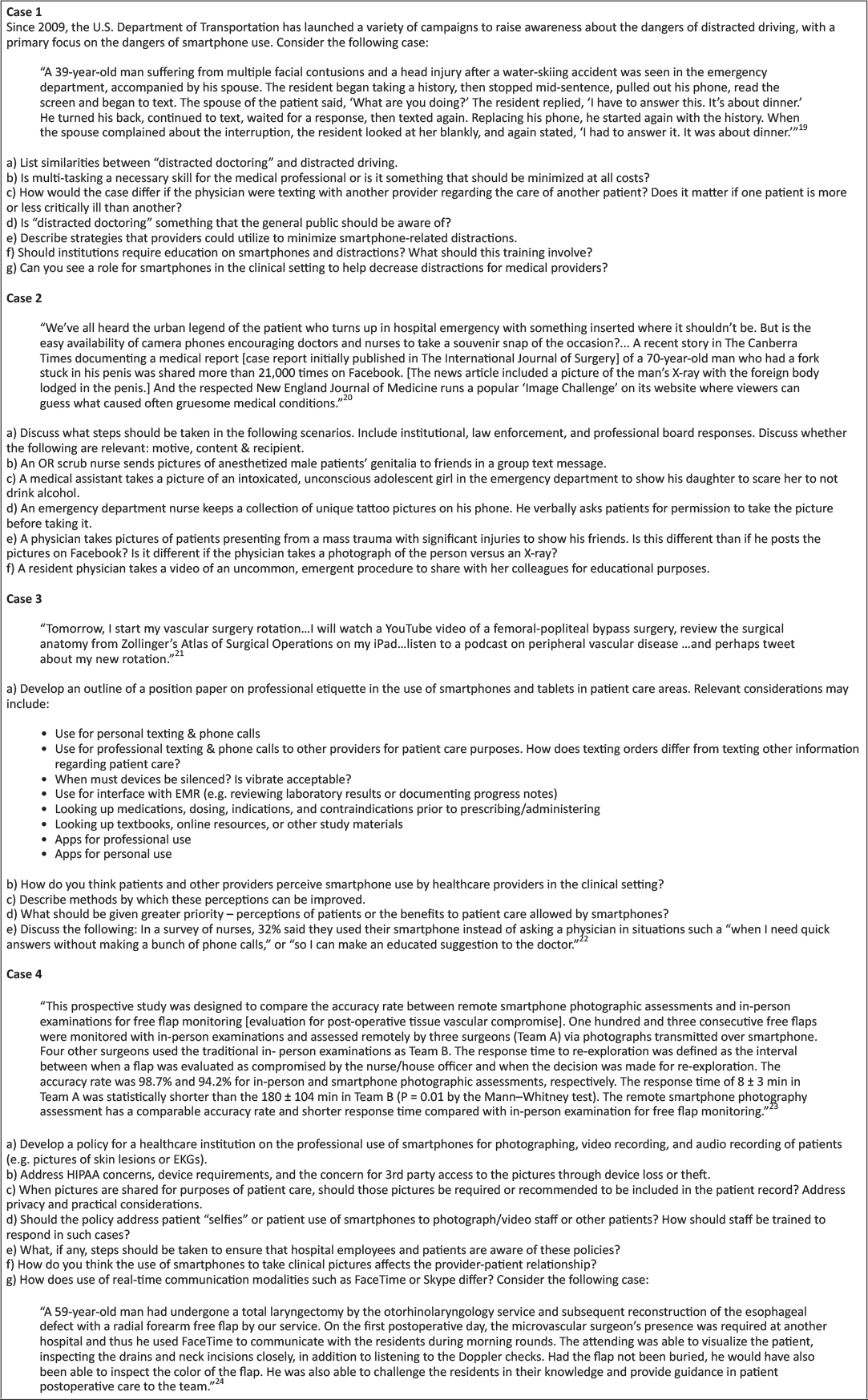

Methods

A roundtable workshop exploring issues of professionalism and smartphone use in the healthcare setting was held at The Academy for Professionalism in Health Care 2016 conference in Philadelphia, PA. Participants included physicians, nurses, medical students, dentists and academic researchers. Participant occupational settings included medical education, academic clinical practice, and private practice. Four case-based scenarios (Figure 1) were discussed in small focus groups and then presented to the entire workshop for further analysis. The results of these discussions were compiled into the following guidelines.

Figure 1: Case-based scenarios and questions

Results

Distraction and Smartphone Use

Smartphone use may result in provider distraction or so-called “distracted doctoring” and increase the risk for patient care errors. Smartphone use in the healthcare setting has the potential to result in distraction in a manner similar to distracted driving. Use of smartphones may involve cognitive, visual, and/or manual tasks that divert the provider’s attention from their patient care responsibilities. As such, frequently repeated activities or procedures may increase the risk that providers will engage in distracting secondary tasks, such as smartphone use. “Bring Your Own Device” (BYOD) policies that require providers to utilize their personal devices for professional purposes may increase the risk of distraction due to non-professional phone calls, text messages, and app notifications. Further research is needed in the area of distracted doctoring and smartphone use.

Healthcare providers commonly hold the misperception that utilizing smartphones for multi-tasking in the healthcare setting improves efficiency and patient care. Professional and personal smartphone utilization in the healthcare setting increases the number of interruptions and the amount of information received and processed by providers while engaging in patient care. While multi-tasking is a necessary skill, it should be minimized when possible. Attentional shifts while multi-tasking interrupt the cognitive processing of information, impair situational awareness, and may increase the likelihood of patient care errors. Interruptions may be minimized by use of “silent”, “airplane”, and “do not disturb” modes, particularly during important patient care activities. The amount of information received by providers may be controlled through specialized ring or text message tones and disabling of app notifications.

Healthcare providers should be educated on the dangers of “distracted doctoring”. Individuals may overestimate their ability to engage in smartphone use without significant distraction. Education on distraction, generally, and specifically smartphone-associated distraction should be implemented at the undergraduate and graduate medical education levels and in patient-safety-related continuing education. Simulation exercises allow participants to experience the detrimental effects of distraction and develop skills for dynamic prioritization of incoming information.

Appropriate smartphone use may decrease distractions and should be encouraged. Smartphones may be utilized to reduce distractions from handheld pagers and overhead paging systems. Calendar functions, alarms, and notes apps may be used to reduce the cognitive load of “to-do lists”. Alarms for medication administration or other time-sensitive tasks may improve timeliness of administration. Smartphone information resources and clinical calculators reduce the need to interrupt current tasks to find a computer. Policies to utilize smartphones to reduce distractions should be considered at the institutional level.

Smartphone Photography in the Healthcare Setting

Smartphone photography is an advantageous learning and communication tool; however, respect for the patient and the patient’s privacy must be paramount. Smartphone photography has the potential to capture disease conditions and procedures that may otherwise be difficult or impossible to record. Such images may be used in educational materials or the peer-reviewed literature and may therefore improve patient care on a global level. Smartphone photography may also be used to transfer information about patients (e.g. lesion, electrocardiogram) to other providers, improving the clinical decision-making process and therefore improving patient care on the individual level. Pitfalls of photography in the healthcare setting include capture of patients while they are vulnerable or when they are unable to fully consent. Patients may perceive an element of coercion when asked to be photographed even if no direct coercive statement is made. The individual rights to privacy, respect, and autonomy are paramount. Respect for privacy and autonomy as it pertains to smartphone photography should be taught at the undergraduate, graduate and continuing education levels. When institutional photography equipment is available, that equipment should be used in lieu of a smartphone.

Consent should be obtained at the time of image capture for the photograph, the intended use and any transmission. Consent should not be obtained at time of admission or triage for later photography. Consent for photography at that time contains an element of implied coercion and is too abstract to be considered informed consent. Informed consent should include the elements of the body part to be photographed, the intended use, and any transmission of the photograph. When possible, written consent should be obtained. If written consent is not possible, verbal consent should be witnessed and documented.

Photographs should be obtained in such a way as to minimize or eliminate the amount of protected health information that is captured. Photographs of the face are typically not necessary. Patient identifiers such as name, date of birth, and medical record numbers should not be included in photographs. Tattoos, piercings, skin conditions, and other unique identifiers compromise patient confidentiality and should be included only with explicit consent and when capture of those elements is required.

Smartphone photography in the healthcare setting for personal or entertainment purposes is inappropriate and should be avoided. Such photography contains too much potential for abuse to be acceptable. Unintended consequences include the inadvertent capture of protected health information or other patient identifiers. The content of seemingly innocuous photographs in the healthcare setting has the potential to distress patients, family members and others.

Healthcare providers have a duty to intervene in situations involving inappropriate smartphone photography. When possible, inappropriate photography should be prevented. If such photography has already occurred, appropriate interventions may include education, deletion of the photograph, or reporting at the institutional or law enforcement level depending on the scenario. Healthcare institutions should have protocols in place for reporting inappropriate smartphone photography with a well-defined chain of command and protections against retribution, including the ability to report anonymously. Patients may similarly take inappropriate smartphone photographs in the healthcare setting and providers should intervene in those situations as well.

Smartphone etiquette and perceptions

Use of smartphones for personal calls, texting and social media apps is to be avoided in patient care areas. Personal use of smartphones in patient care areas may convey an informality or lack of professionalism to patients, their families, and other staff. Even when providers are not actively engaged in patient care activities, personal smartphone use should be avoided. Patients may perceive that aspects of their care, such as wait time, face time with providers, or attention to their complaints, are being adversely affected by personal smartphone use. Personal smartphone use may be permitted in staff lounge areas, provided that it does not adversely affect patient care.

Smartphones should be set to “silent”, “airplane”, or “do not disturb” modes during patient encounters. Vibrate modes are frequently audible and should not be utilized. App notifications should be turned off using one of the above-mentioned modes. Smartphone interruptions during sensitive discussions may be particularly distressing to patients. Providers are encouraged to remind colleagues to put their smartphones into one of the above-mentioned modes prior to such a discussion. Most healthcare providers do not need to be immediately available to colleagues. In the event that a healthcare provider must be immediately available during a patient encounter, the possibility of interruption should be communicated to the patient at the outset. The use of a “do not disturb” mode that permits calls from only pre-identified emergency contacts may reduce the risk of interruption and is recommended.
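The recommended “do not disturb” behaviour amounts to an allowlist rule: while the mode is on, only pre-identified emergency contacts may interrupt. The Python sketch below illustrates that logic only. The class `DoNotDisturbPolicy`, its fields, and the contact labels are hypothetical; real devices configure this through their operating system settings, not through this code.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class DoNotDisturbPolicy:
    """Hypothetical model of a "do not disturb" mode with an emergency allowlist."""

    emergency_contacts: Set[str] = field(default_factory=set)
    enabled: bool = True

    def should_interrupt(self, caller: str) -> bool:
        # With the mode off, every call or notification comes through.
        if not self.enabled:
            return True
        # With the mode on, only pre-identified emergency contacts come through.
        return caller in self.emergency_contacts


# Illustrative contact labels (assumptions, not taken from the article).
policy = DoNotDisturbPolicy(emergency_contacts={"charge-nurse", "on-call-attending"})
print(policy.should_interrupt("charge-nurse"))
print(policy.should_interrupt("social-media-app"))
```

Keeping the allowlist short matches the guideline's observation that most providers do not need to be immediately available to colleagues.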

Patient permission should be obtained for professional smartphone utilization during patient care activities. As discussed above, smartphones are a resource for healthcare professionals, allowing increased communication with other providers, an interface with the electronic medical record (EMR), clinical calculators, and immediate access to information resources (e.g. pharmacopeia). The inherent portability of smartphones over other electronic devices makes them particularly useful during patient care activities. However, explicit patient permission should be obtained prior to their utilization during patient care activities. Seeking permission introduces the device into the patient-provider relationship and serves the dual purpose of informing the patient that the device is being used to facilitate their care and confirming that use of the device will not be unduly distressing to the patient. To facilitate communication regarding this practice, patients should be encouraged to ask their physicians what they are using their devices for.