Pretesting mHealth: Implications for Campaigns among Underserved Patients

Disha Kumar, BS, BA1,2, Monisha Arya, MD, MPH3,4

1Rice University, 6100 Main Street, Houston, Texas 77005, USA; 2School of Medicine, Baylor College of Medicine, One Baylor Plaza, Houston, Texas 77030, USA; 3Department of Medicine, Section of Infectious Diseases and Section of Health Services Research, Baylor College of Medicine, One Baylor Plaza, Houston, Texas 77030, USA; 4Center for Innovations in Quality, Effectiveness and Safety, Michael E. DeBakey VA Medical Center 2002 Holcombe Blvd (Mailstop 152), Houston, Texas 77030, USA

Corresponding Author: disha.kumar@bcm.edu

Journal MTM 5:2:38–43, 2016

doi: 10.7309/jmtm.5.2.6

Background: For health campaigns, pretesting both the channel of message delivery and the process evaluation is important for eventual campaign effectiveness. We conducted a pilot study to pretest text messaging as an mHealth channel for traditionally underserved patients.

Aims: The primary objectives of the research were to assess 1) successful recruitment of these patients for a text message study and 2) whether recruited patients would engage in a process evaluation after receiving the text message.

Methods: Recruited patients were sent a text message and then called a few hours later to assess whether they had received, read, and remembered the sent text message.

Results: We approached twenty patients, of whom fifteen consented to participate. Of these consented participants, ten (67%) engaged in the process evaluation and eight (53%) were confirmed as receiving, reading, and remembering the text message.

Conclusion: We found that traditionally underserved and under-researched patients can be recruited to participate in a text message study, and that recruited patients would engage in a process evaluation after receiving the text message.

Introduction

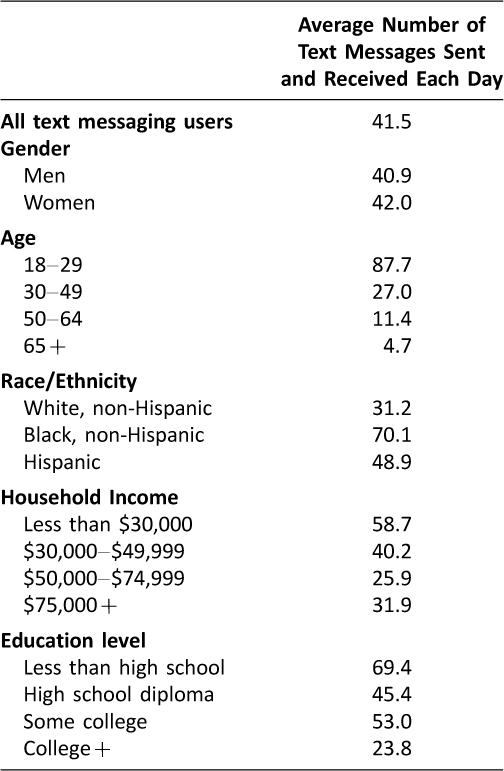

In the U.S., 90% of adults own a mobile phone and 81% of mobile phone owners use text messaging. Americans who text send or receive an average of 41.5 text messages each day,1 making text messaging a potent tool for communication. As text messaging has become more prevalent, its uses have broadened beyond everyday conversation; several research studies have already capitalized on its ubiquity and advantages to encourage health behavior change.2–7 Notably, text messaging can readily reach traditionally underserved groups: racial and ethnic minorities, people with low income, and people with low educational attainment. These groups send and receive more text messages than other groups (Table 1).1

The NIH publication Making Health Communication Programs Work, commonly called “The Pink Book”, emphasizes pretesting messages to ensure that they resonate with the target audience and can influence behavior change.8 Given the emergence of new media for health campaigns, pretesting the channel of message delivery is equally important. The Pink Book also emphasizes process evaluation, a step that is often overlooked in health campaign design. Process evaluation assesses the effectiveness of project management and of each step of campaign development.9

In preparation for an mHealth campaign, we conducted a pilot study to pretest an mHealth channel and a process evaluation among predominantly racial and ethnic minority, low-income patients. These populations are traditionally hard to reach and experience worse health outcomes.10 The primary objectives were to assess 1) successful recruitment of these patients for a text message study and 2) whether recruited patients would engage in a process evaluation after receiving the text message. A secondary objective was to assess whether patients could remember the text message that we sent.

Methods

Setting and Participants

This study was conducted in February 2015 in a community clinic affiliated with Baylor College of Medicine in Houston, Texas. The clinic serves a predominantly low-income population, many of whom are racial and ethnic minorities and lack health insurance.11 Among patients of the clinic's health system, 60% are Hispanic and 25% are African-American; 64% self-pay for their health care, 21% are covered by Medicaid or the Children’s Health Insurance Program, and 10% are covered by Medicare.11 The study was approved by the Baylor College of Medicine Institutional Review Board.

Participants were eligible if they 1) were patients at the clinic, 2) were 35–55 years of age, 3) owned a mobile phone, and 4) spoke English. The age range was chosen through audience segmentation so that participant demographics matched those of the target audience for a future mHealth campaign. For recruitment, a research assistant approached patients in the waiting room of the study site.

During the consent process, participants were informed that the research team would send them a simple text message, such as “Have a great day,” and that a research assistant would then call them to evaluate whether they had received, read, and remembered the text message. This call constituted the process evaluation. Participants consented verbally, and a member of the research team recorded their first name and phone number. Demographic information was not collected.

Table 1: Pew Research Report: The average number of text messages sent and received each day by demographic group (1)

Figure 1: Flow Diagram of Study. Participants who consented were texted and called to evaluate receipt, reading, and memory of the text message.

Text Message Delivery

The research team used Google Voice® to send the text messages.12 Google Voice® was accessed through a study Gmail® account created for this purpose, and a Google Voice® phone number was selected for the study. Google Voice® was chosen because it provides a non-personal phone number, assigned by Google, from which text messages can be sent. Google Voice® also offers free text messaging and allows the same text message to be sent to up to five participants at a time. Text messages can easily be sent from a computer and remain secure: they are available only to members of the research team who have the username and password for the Google Voice® account. Participants were texted through the Google Voice® platform within 24 hours of consenting to participate. The message said, “Houston is a great city to live in! From Alex at Baylor College of Medicine.”
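Because Google Voice® allows the same message to be sent to at most five participants at a time, a group of consented participants has to be split into batches before sending. The Python sketch below shows one way to do that and is an illustration only: it does not call Google Voice® (no programmatic interface is assumed), the function name `batch_recipients` is hypothetical, and the placeholder numbers are not study data.

```python
from typing import Iterator, List, Sequence

# The study message from the Methods; the batch size reflects the
# five-recipient limit described for Google Voice.
STUDY_MESSAGE = ("Houston is a great city to live in! "
                 "From Alex at Baylor College of Medicine.")
MAX_RECIPIENTS_PER_SEND = 5


def batch_recipients(numbers: Sequence[str],
                     size: int = MAX_RECIPIENTS_PER_SEND) -> Iterator[List[str]]:
    """Yield consecutive groups of at most `size` phone numbers."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(numbers), size):
        yield list(numbers[start:start + size])


if __name__ == "__main__":
    # Fifteen fictional placeholder numbers standing in for consented participants.
    participants = [f"555-01{i:02d}" for i in range(15)]
    for group in batch_recipients(participants):
        # Each group would correspond to one manual send; nothing is transmitted here.
        print(len(group), "recipients:", STUDY_MESSAGE)
```

With fifteen participants this yields three batches of five, so the whole cohort can be reached in three sends.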

Process Evaluation

Process evaluation phone calls were conducted within three hours of sending the text message. Calls were made from an office phone rather than from the Google Voice® number. During the call, the research assistant first verified that the person who answered was the study participant and then asked whether the participant had received the text message. To confirm receipt, participants who said yes were asked whether the message was A) “Houston is a great city to live in! From Alex at Baylor College of Medicine.” or B) “The weather outside is lovely. From Alex at Baylor College of Medicine.” Participants who selected message “A” were designated as having received, read, and remembered the message.
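The evaluation rule above (self-reported receipt followed by a forced choice between the sent message and a distractor) and its timing windows can be written as a small decision function. The Python sketch below is illustrative: grouping no answer, disconnected, and wrong numbers into a single "not reached" outcome is an assumption made for brevity, and the names `classify_call` and `within_protocol` are hypothetical.

```python
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional

TEXT_WINDOW = timedelta(hours=24)  # text sent within 24 h of consent
CALL_WINDOW = timedelta(hours=3)   # evaluation call within 3 h of the text


class Outcome(Enum):
    NOT_REACHED = "not reached"        # assumed grouping: no answer, disconnected, wrong number
    NOT_CONFIRMED = "not confirmed"    # denied receipt or chose distractor "B"
    CONFIRMED = "received, read, and remembered"


def classify_call(reached: bool, said_received: bool,
                  choice: Optional[str]) -> Outcome:
    """Apply the two-step check: self-reported receipt, then A/B recognition."""
    if not reached:
        return Outcome.NOT_REACHED
    if said_received and choice == "A":  # "A" is the message actually sent
        return Outcome.CONFIRMED
    return Outcome.NOT_CONFIRMED


def within_protocol(consented: datetime, texted: datetime,
                    called: datetime) -> bool:
    """True if both delays fall inside the windows described in the Methods."""
    return (timedelta(0) <= texted - consented <= TEXT_WINDOW
            and timedelta(0) <= called - texted <= CALL_WINDOW)


if __name__ == "__main__":
    t0 = datetime(2015, 2, 2, 10, 0)  # hypothetical consent time
    print(classify_call(True, True, "B"))  # Outcome.NOT_CONFIRMED
    print(within_protocol(t0, t0 + timedelta(hours=20),
                          t0 + timedelta(hours=22)))  # True
```

Requiring the correct forced choice, not just a "yes", is what lets the evaluation distinguish participants who merely report receipt from those who can identify the message.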

Results

We approached twenty patients, of whom fifteen consented to participate (75% response rate). Factors affecting the response rate included the English-language eligibility requirement and the 35–55 year age range. Additionally, the clinic was busy during recruitment, which limited the time patients had to talk with the research team. We texted all fifteen participants with message “A” and then called each of them (see Figure 1 for the study process). Of the fifteen participants who consented and were texted, three did not answer the phone and one number was disconnected. The research team spoke with the remaining eleven, one of whom said the team had dialed the wrong number. Overall, ten participants (67%) remained in the study protocol and engaged in the process evaluation. Two participants said they had received and read the text message but chose “B”, the incorrect message; we designated these two participants as not having received, read, and remembered the correct text message. Eight participants selected “A”. Therefore, of the ten participants who completed the process evaluation phone call, eight (80%) received, read, and remembered the text message. Of all fifteen enrolled participants, eight (53%) were reachable by phone for the evaluation and confirmed as having received, read, and remembered the text message.
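The participant flow above can be checked with a few lines of arithmetic. The Python sketch below recomputes the reported percentages from the stage counts and the number lost at each step; the stage labels and the function names `pct` and `stage_losses` are illustrative and are not part of the study protocol.

```python
from typing import List, Tuple

# Stage counts taken from the Results; stage labels are descriptive only.
STAGES: List[Tuple[str, int]] = [
    ("approached", 20),
    ("consented and texted", 15),
    ("spoke with research team", 11),       # 3 no answer, 1 disconnected
    ("engaged in process evaluation", 10),  # 1 wrong number
    ("chose message A", 8),                 # 2 chose distractor "B"
]


def pct(part: int, whole: int) -> int:
    """Percentage rounded to the nearest whole number.

    Python's round() uses round-half-to-even, but none of the ratios here
    fall exactly on .5, so the results match conventional rounding.
    """
    return round(100 * part / whole)


def stage_losses(stages: List[Tuple[str, int]]) -> List[Tuple[str, int]]:
    """Number of participants lost on the way into each stage."""
    return [(later[0], earlier[1] - later[1])
            for earlier, later in zip(stages, stages[1:])]


if __name__ == "__main__":
    counts = dict(STAGES)
    consented = counts["consented and texted"]
    engaged = counts["engaged in process evaluation"]
    confirmed = counts["chose message A"]
    print("consent rate:", pct(consented, counts["approached"]))  # 75
    print("retention:", pct(engaged, consented))                  # 67
    print("confirmed / engaged:", pct(confirmed, engaged))        # 80
    print("confirmed / consented:", pct(confirmed, consented))    # 53
    for stage, lost in stage_losses(STAGES):
        print(f"lost before '{stage}': {lost}")
```

Laying the counts out as an ordered list of stages makes each drop-off point explicit, which matches how the Discussion walks through them.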

Discussion

Our study offers some insight into the process of implementing a text message campaign for traditionally hard-to-reach patient populations. Importantly, 75% of approached patients consented to participate in our text message study, and 67% of those who consented were retained. Over half of the participants completed the entire study protocol and correctly identified the text message that was sent to them. While many mHealth studies examine behavior change and clinical outcomes, fewer explicitly measure the numerous steps that must succeed to deliver and evaluate health messages via text. As noted in a review by Fjeldsoe et al., few studies adequately analyze process outcomes,13 as we attempted to do in the current study. Those that do often report process outcome data amid behavior change and health outcome data, which obscures how well the text message intervention itself was implemented.

Process outcomes evaluated in previous studies include study retention, text message responses, and text message viewing. These outcomes vary across studies, and differ from ours, due to differences in participant demographics and study design. Fjeldsoe et al. found that participant retention in text message studies ranged from 43% to 100%.13 A study of low-income children and adolescents found that 3027 of 17640 children and adolescents assessed for eligibility (17%) were excluded for lack of a cell phone.3 Furthermore, text messages were undeliverable to 11% of parents, the phone number was incorrect for 1% of parents, and 4.5% of parents dropped out of the study by declining further text messages.3 Similarly, a study of low-income pregnant women excluded 172 of 2106 patients (8%) for lack of a cell phone number, and 10 participants dropped out during the study by declining further text messages.4 Finally, a text message study aimed at improving diabetes self-management found that 42 of 44 recruited potential participants (95%) consented; two (5%) participants requested to drop out of the study and three (7%) were lost to follow-up.14 Beyond participant retention, studies have also examined whether participants respond to actionable text messages. A pilot study evaluating text message reminders to improve adherence to antiretroviral therapy found that participants responded to 48% of 7110 messages requesting a response.15 Another study found that 74% of participants in the intervention group viewed at least half of the text messages sent by the research team and 30% viewed most or all of them.16 A study of whether people would pay for text messaging health reminders asked participants to respond to three questions: three of fifty-one participants (6%) did not answer the first question, two (4%) did not answer the second, and all answered the third.5 Although these studies described process outcomes obtained during text message interventions, they were not designed to determine how those outcomes could be improved. We address that question below.
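The proportions quoted from earlier studies can be recomputed from their numerators and denominators. The Python sketch below does this for the figures given above; the labels are shorthand, and using the 42 consented participants as the denominator for the dropout and loss-to-follow-up figures from reference 14 is an assumption, since the text does not state it.

```python
from typing import List, Tuple

# (label, numerator, denominator, percentage as quoted in the text)
QUOTED: List[Tuple[str, int, int, int]] = [
    ("ref 3: excluded, no cell phone", 3027, 17640, 17),
    ("ref 4: excluded, no cell phone number", 172, 2106, 8),
    ("ref 14: consented of recruited", 42, 44, 95),
    ("ref 14: requested dropout (assumed denominator 42)", 2, 42, 5),
    ("ref 14: lost to follow-up (assumed denominator 42)", 3, 42, 7),
    ("ref 5: skipped first question", 3, 51, 6),
    ("ref 5: skipped second question", 2, 51, 4),
]


def mismatches(rows: List[Tuple[str, int, int, int]]) -> List[str]:
    """Labels whose recomputed whole-number percentage differs from the quote."""
    return [label for label, num, den, quoted in rows
            if round(100 * num / den) != quoted]


if __name__ == "__main__":
    for label, num, den, quoted in QUOTED:
        print(f"{label}: {100 * num / den:.1f}% (quoted {quoted}%)")
    print("mismatches:", mismatches(QUOTED))
```

All seven quoted figures agree with their recomputed values after rounding to whole percentages.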

While our study objectives were met, we note aspects of the study design that could be improved for future mHealth campaigns. At the first drop-off point, 75% of approached patients consented to participate. Although this is a high proportion, the sample size could have been increased by approaching more patients. Additionally, recruitment and consent13 for minimal-risk studies could be conducted via text message to increase sample sizes. At the second drop-off point, three participants did not answer the phone call. This may be because they did not recognize the research team’s phone number; it is also possible that they were busy and could not answer. Because the phone call served only as the process evaluation, a more convenient way to communicate with participants in mHealth campaigns may be to ask them to reply to the original text message. At the third drop-off point, patient recall of the text message, several factors may have influenced participants’ memory of the message. Participants may not yet have received or read the text message at the time of the phone call. Alternatively, because of social desirability bias, they may have guessed text message “A” or “B” to please the research assistant. Participants may also have forgotten the text message because they had received too many texts that day, or they may have seen it but not processed it well enough to recall its content a few hours later. Notably, both text message choices had similar tones, which may have made the correct message harder to identify. In addition, the delay between the text and the evaluation call may have been too long. Finally, participants who do not frequently receive text messages may have been less accustomed to reading and remembering them.

To streamline a text message study protocol, we recommend the following measures. If possible, the same phone number should be used to both text and call participants. The research assistant should also read the participant’s mobile phone number back to the participant to ensure that it was recorded correctly and that no wrong numbers are called during the evaluation. Additionally, the research assistant should ask the participant to save the study phone number as a contact in their mobile phone. Finally, the research assistant should call participants at varying times, including outside business hours, to increase the chance of reaching them at a convenient time.
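The read-back recommendation amounts to comparing the number as recorded with the number the participant confirms, regardless of how it was written down. A minimal Python sketch, assuming 10-digit U.S. numbers optionally prefixed with the country code 1; the function names are hypothetical and the example numbers are fictional.

```python
import re


def normalize_us_number(raw: str) -> str:
    """Strip punctuation and an optional leading country code 1."""
    digits = re.sub(r"\D", "", raw)  # keep digits only
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    if len(digits) != 10:
        raise ValueError(f"expected a 10-digit U.S. number, got {raw!r}")
    return digits


def format_for_read_back(raw: str) -> str:
    """Group digits as XXX-XXX-XXXX so they are easy to read aloud."""
    d = normalize_us_number(raw)
    return f"{d[:3]}-{d[3:6]}-{d[6:]}"


def read_back_matches(recorded: str, confirmed: str) -> bool:
    """True if both strings denote the same 10-digit number."""
    return normalize_us_number(recorded) == normalize_us_number(confirmed)


if __name__ == "__main__":
    recorded = "(713) 555-0123"
    print("Reading back:", format_for_read_back(recorded))
    print(read_back_matches(recorded, "+1 713 555 0123"))  # True
    print(read_back_matches(recorded, "713-555-0132"))     # False
```

Normalizing before comparing means a number written with parentheses or spaces still matches the digits the participant confirms, while a transposed digit is flagged before any evaluation call is placed.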

The major limitation of this study is its small sample size. However, our aim was to assess the feasibility of a text message study for traditionally underserved patients, and our results may not generalize to the wider population. Nevertheless, our study should be useful for researchers intending to evaluate the effectiveness of text messaging for health promotion among low-income patients. Notably, our relatively high retention rate of 67% was obtained with a very short study protocol (1–2 days); text message studies of longer duration may find lower retention rates. An additional limitation is response bias in the evaluation phone call: participants who answered “A” may not actually have received, read, and remembered the text message and may simply have guessed the correct choice. Finally, some patients may have been reluctant to join the study because the benefit of receiving a generic, non-health-related text message may not have been clear to them. Studies that send more relevant health messages may encourage greater participation.

Our findings contribute to the limited literature on process outcomes in developing mHealth campaigns. Further investigation of process outcomes in text message studies is needed, especially for traditionally underserved and under-researched patient populations who may have limited knowledge and use of mHealth. As a relatively new medium of communication in healthcare and research, text messaging should be studied further to ensure that patients of differing demographics can receive, read, remember, and ultimately benefit from text messages.

Conclusions

Text messaging can be a potent research tool. This study’s findings support a future mHealth text message intervention for patients who are predominantly racial and ethnic minorities with low income. We found that such patients can be adequately recruited to participate in a text message study, and that recruited patients will engage in a process evaluation to confirm receipt of the text message.

Acknowledgements

This work was supported by the National Institute of Mental Health of the National Institutes of Health under Award Number K23MH094235 (PI: Arya). This work was supported in part by the Center for Innovations in Quality, Effectiveness and Safety (#CIN 13-413). The views expressed in this article are those of the authors and do not necessarily represent the views of the National Institutes of Health, the Department of Veterans Affairs, Rice University, or Baylor College of Medicine.

The authors would like to thank Dr. Thomas P. Giordano for his help in conceptualizing the study and Ms. Alexandra Trenary and Ms. Hannah Chen for their help in conducting this study. The authors would also like to thank Ms. Sajani Patel and Ms. Ashley Phillips for their thoughtful comments on the manuscript.

All authors have completed the Unified Competing Interest form at www.icmje.org/coi_disclosure.pdf (available on request from the corresponding author) and declare: M. Arya reports a grant from the National Institutes of Health/National Institute of Mental Health; no financial relationships with any organisations that might have an interest in the submitted work in the previous 3 years; no other relationships or activities that could appear to have influenced the submitted work.

References

1. Smith A. Americans and text messaging. Pew Research Center’s Internet & American Life Project; 2011. Available at http://www.pewinternet.org/files/old-media//Files/Reports/2011/Americans%20and%20Text%20Messaging.pdf (accessed 1 Feb 2016).

2. Free C, Phillips G, Galli L, et al. The effectiveness of mobile-health technology-based health behaviour change or disease management interventions for health care consumers: a systematic review. PLoS Med 2013;10(1):e1001362.

3. Stockwell MS, Kharbanda EO, Martinez RA, et al. Effect of a text messaging intervention on influenza vaccination in an urban, low-income pediatric and adolescent population: a randomized controlled trial. JAMA 2012;307(16):1702–8.

4. Stockwell MS, Westhoff C, Kharbanda EO, et al. Influenza vaccine text message reminders for urban, low-income pregnant women: a randomized controlled trial. Am J Public Health 2014;104(Suppl 1):e7–12.

5. Cocosila M, Archer N, Yuan Y. Would people pay for text messaging health reminders? Telemed J E Health 2008;14(10):1091–5.

6. Cole-Lewis H, Kershaw T. Text messaging as a tool for behavior change in disease prevention and management. Epidemiol Rev 2010;32:56–69.

7. Lee HY, Koopmeiners JS, Rhee TG, et al. Mobile phone text messaging intervention for cervical cancer screening: changes in knowledge and behavior pre-post intervention. J Med Internet Res 2014;16(8):e196.

8. National Cancer Institute. Making Health Communication Programs Work. (National Institutes of Health). Available at http://www.cancer.gov/publications/health-communication/pink-book.pdf (accessed 1 Feb 2016).

9. Atkin CK, Rice RE. Theory and Principles of Public Communication Campaigns. In: Atkin CK, Rice RE (Eds.) Public Communication Campaigns. Sage Publications, Inc. 2001:13.

10. U.S. Centers for Disease Control and Prevention. CDC Health Disparities and Inequalities Report – United States, 2013; 2013. Available at http://www.cdc.gov/mmwr/pdf/other/su6203.pdf (accessed 1 Feb 2016).

11. Harris Health System Facts and Figures. Harris Health System. Available at https://www.harrishealth.org/en/about-us/who-we-are/pages/statistics.aspx (accessed 1 Feb 2016).

12. Google Voice. 2015. Available at https://www.google.com/googlevoice/about.html (accessed 1 Feb 2016).

13. Fjeldsoe BS, Marshall AL, Miller YD. Behavior change interventions delivered by mobile telephone Short-Message Service. Am J Prev Med 2009;36(2).

14. Dobson R, Carter K, Cutfield R, et al. Diabetes Text-Message Self-Management Support Program (SMS4BG): A Pilot Study. JMIR mHealth uHealth 2015;3(1).

15. Dowshen N, Kuhns LM, Johnson A, Holoyda BJ, Garofalo R. Improving adherence to antiretroviral therapy for youth living with HIV/AIDS: a pilot study using personalized, interactive, daily text message reminders. J Med Internet Res 2012;14(2):e51.

16. Whittaker R, et al. MEMO – A mobile phone depression prevention intervention for adolescents: development process and postprogram findings on acceptability from a randomized controlled trial. J Med Internet Res 2012;14(1):e13.